Self-driving or autonomous cars promise a change in patterns of mobility more radical than any previous change in transportation. While they depend on maps, the maps made for self-driving cars are perhaps unlike any other: they are not made or designed for human eyes. To be sure, we already drive in maps that we see ourselves moving along, in a mapped world as much as a real topography, and the internalized maps on our dashboards offer a basis from which it seems one barely has to move to imagine the machine-readable map of a self-driving vehicle–

–so that even our road signs can be obscured by the options that we have on the maps in our dashboards, and we are almost ready to offload responsibility for following the map onto the voices that provide directions and the images that track our presence on the road, which put us in road maps that all but replace the landscapes through which we drive.

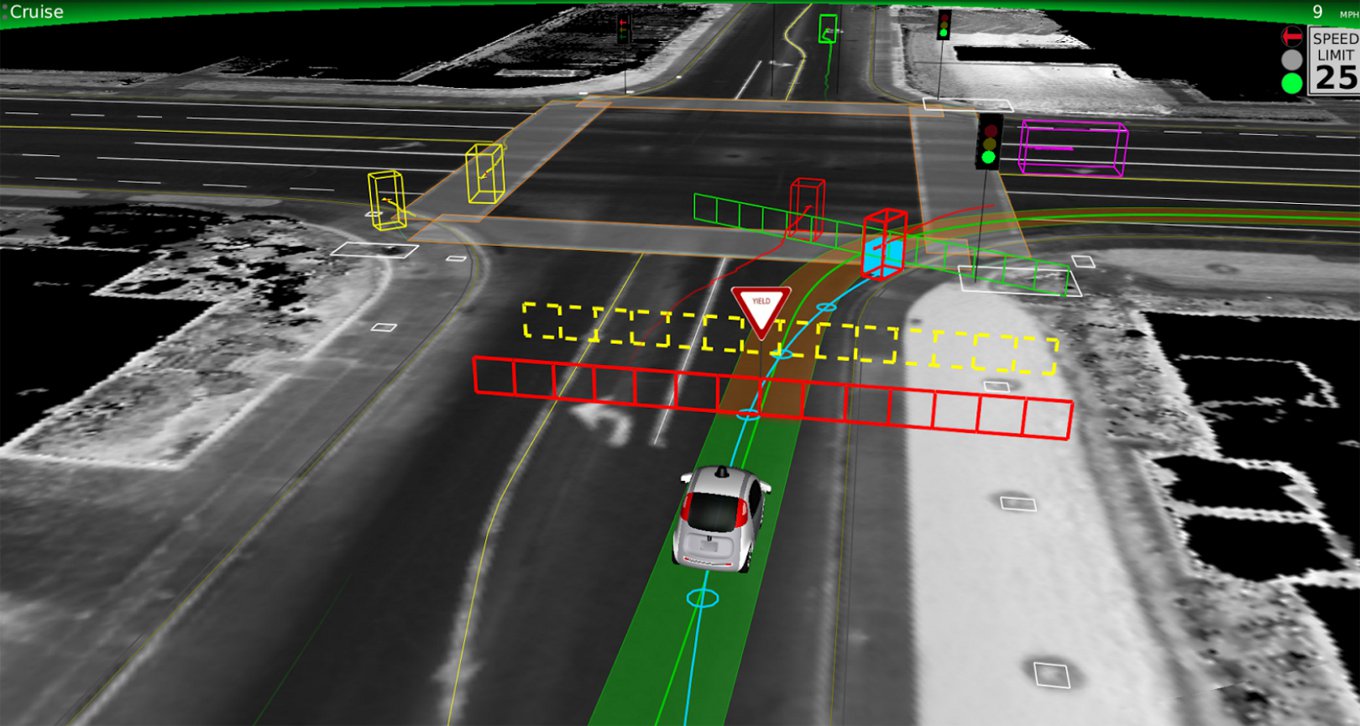

Assembling a machine-readable record of each edge of the road seems an increasing eventuality. Roads that are scanned, integrated with LIDAR imagery of the environment, and augmented with real-time feedback loops would provide a virtual 1:1 map of automotive environments, in which cars could navigate autonomously within the parameters of speed limits, weather conditions, and oncoming traffic.

Yet how intelligent are these “intelligent maps” by which self-driving cars integrate and position themselves in the spatial networks of roads? As Shannon Mattern has argued, “With the stakes so high, we need to keep asking critical questions about how machines [are able] to conceptualize and operationalize space” in ways that can recognize the human actors in space, about how the increased role of these networks of mapping serves as an actant, shifting the networks of on-road behavior, and about how, as Mattern puts it, “artificial intelligences, with their digital sensors and deep learning models” that perpetuate one image of space will “intersect with cartographic intelligences and subjectivities beyond the computational ‘Other’”. How will such maps, put differently, register the people who also occupy the sidewalks and the other cars on the highway (whether as drivers or passengers), and how will they be affected by them?

The lack of a clear roadmap for self-driving cars notwithstanding, the eerily ghostly nature of LIDAR views of streets, overpasses, and streetside scenery seems to point up the absence of a sensory cartography of place. The maps for self-driving cars are not, it is true, for human subjects, and it is perhaps unfair to prejudge them or their selectivity. But we are inclined to trust machines to read space accurately and comprehensively, by synthesizing a total image of street conditions that replaces the driver’s tacit sense of road conditions, as if they contained a greater precision than paper maps could hope to contain. Our shared amazement at the possibilities of mechanical sensing is, in a sense, pushed to new limits in the promises of self-driving cars, which have quickly gained multiple evangelists.

We already have cars able to signal their approach to the edges of traffic lanes, alerting their human drivers to impending danger. The promises of self-driving cars have generated increasing optimism in the United States and Japan, as the next generation of vehicles in a culture ready to embrace the new, perhaps because they promise the very possibility of constant motion in a country of speed. But by removing routes of human motion, and how humans move through road systems, from direct intelligence, the maps that are being designed for autonomous vehicles to navigate the roadways of America and beyond suggest a new nature of space, as much as of transportation or transit; and the maps for self-driving cars, while not designed for human readers, suggest a scary landscape rarely open to surprises and eerily empty of any sign of human habitation.

And what will even guarantee that the self-driving cars will not go off the roads? The absence of human intelligence from the maps for self-driving cars creates a code-space that seems to depend on its interaction with human intelligence far more than its maps seem to register at first sight. The simulated scenarios that have been created for such self-driving cars by engineers seem to seek to “provide a view of the world that a driver alone cannot access, seeing in every direction simultaneously, and on wavelengths that go far beyond the human senses,” but by nature depend on the ability to translate real-time scenarios in HD maps–as well as topological models–into the car’s actual course.

For in promising to synthesize, compress and make available amazing amounts of spatial information and data sufficient to process the rapid increase of roadways that increasingly clog much of the inhabited world, they are maps for the age of the Anthropocene, when ever-increasing spaces are being paved. And even after the arrival of promising “autonomous vehicles” from Tesla, which has introduced a new Autopilot feature able to maneuver on well-marked highways, and tests of urban driving by Uber, General Motors, and of course Google, relying only on sensors to navigate space offers limited safety in many areas, where vehicles are forced to integrate LIDAR, mid- and low-range radar, camera-based sensors, and road maps of real-time situations, and have difficulty calibrating road conditions and weather with the efficiency that human drivers do. The absence of a clear road map for their integration, however, is paralleled by the inability to synthesize contingent information in maps, which in their absence of selectivity offer oddly hyper-rich levels of information.

The notion of processing such comprehensive maps was far away when DARPA sent out a call in 2003 inviting engineers to design self-driving cars that could navigate a one-hundred-and-forty-two-mile-long course across the desert, from near Barstow, CA, to Primm, Nevada, without giving them a sense of its coordinates. On a race-course filled with gullies, turns, rocks, switchbacks and obstacles, from train tracks to cacti, entrants hoped to integrate GPS and sensors to create a car able to navigate space with as complete an image of road conditions as was possible. If the rugged nature of these rigged-out vehicles recalled the first run of a Mad Max film in their outsized paramilitary nature, designed as if to master landscape of any sort, they were so over-fitted with machinery and with what seemed futuristic sensors, tantamount to signage–

–as to seem to wrestle with the fundamental problem of mastering spatial information that the new generation of autonomous vehicles has placed front and center.

DARPA’s top-down attempt to stage a race of autonomous vehicles was intended to keep soldiers out of harm’s way in a military context. The attempt to generate a new sort of military vehicle raised compelling questions of integrating a range of spatial signs in an apparatus of machine vision, laser range-finding data, and satellite imagery, but the vehicles suffered from an inability to take in environmental information: no cars completed the course as it was staged, and the vehicle traveling the farthest went only seven and a half miles. Even on a course located in the desert (still the preferred site for testing most self-driving cars, given its lack of variable weather and its better-kept road surfaces, which minimize unpredicted external influences), the relation of car to world was less easily negotiated than many thought.

While the DARPA Grand Challenge wasn’t immediately successful, it set a basis for future collaboration between machine-learning and automotive companies around notions of remote sensing. It placed front and center the remaining problem of how to establish more than a one-dimensional picture of the road ahead, so that the car could navigate it most easily. And by 2007, the Urban Challenge invited autonomous vehicles to navigate the streets of an urban environment in Victorville, Calif., against moving traffic and obstacles and following traffic regulations, in ways that lifted a corner on the mappability of the future of driverless cars, as if to throw pasta against the wall in the hopes that some of it would stick. Although the new starting point of self-driving cars on a network of readable roads, equipped with recognizable signage, remains the most profitable area for development, the machine-readable road maps eerily naturalize the parameters of the roads in their content, and absent humans from their surface. Despite the recourse to satellite photography and attempts to benefit from aerial views, the notion of a map for the autonomous vehicle was then barely conceived. But in the almost fifteen years since, the maps that are being developed for self-driving cars have grown into an industry of their own, promising to orient cars to machine-readable records of the roadways in real time.
