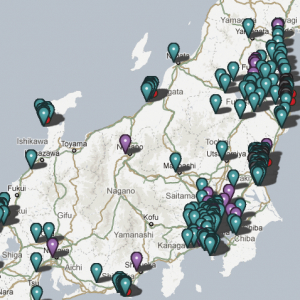

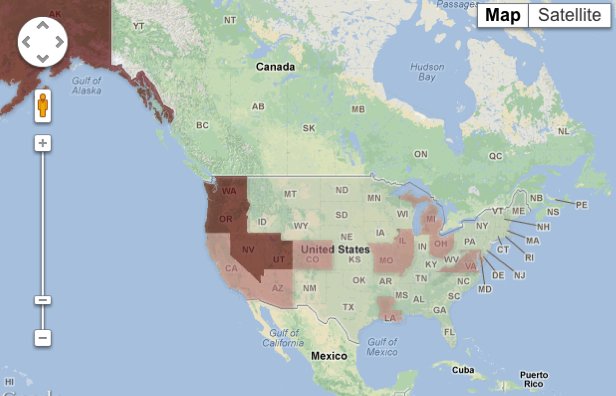

Few maps rely entirely on self-reported measurements today: the data-rich basis of most maps makes poor controls on data seem an early modern throwback. But the ability to transmit datasets to the internet from local devices has changed all that. The recent proliferation of radioactivity maps is based on the open sourcing of self-reported measurements to form a new picture, placing readings taken with Geiger counters in a framework borrowed from Google Maps. Although the only instrument to register radiation’s presence is a Geiger counter, and no standards have been developed for representing rises in radiation counts in different regions–or indeed the limits of danger to personal health–the provision of such a map is crucial to disseminating any information about a potential future disaster. Even if the fears of tsunami that recent earthquakes continue to cause have encouraged comical spectacles of geographic and oceanographic ignorance, and DIY cartography that makes one wish that not everyone had a handheld device–

July 31, 2025

–the benefits of crowd-sourced maps made by more savvy folks overseas, who are able to register the dangers of nuclear fallout and are more practiced with the catastrophic consequences of tsunami, have encouraged a public-safety vigilante cartography that we would do well to retain as a model as we face worldwide environmental dangers, and as natural disasters stand to disrupt the energy industry. While the frames of cell phones may be a cause of geographic ignorance among those who forget the world is a curved sphere, or a pseudo-sphere, the value of reporting local conditions and geocoded observations to alert folks to the consequences of catastrophic conditions should stand as a model practice of citizen science of continued value.

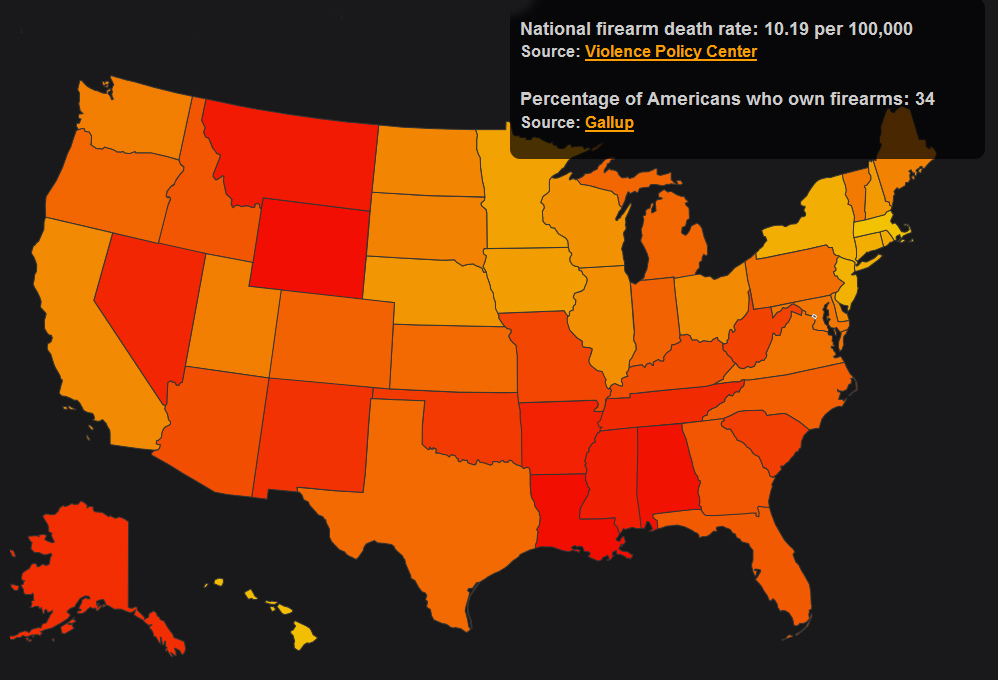

While the three reactors that released radiation in the Fukushima meltdown created the largest release of radiation into the atmosphere, the release of the 300 tons of radioactive waste reported to be spewing from the reactors into the Pacific Ocean as they cooled may have slipped off the radar of daily news; on the internet, it may have become the greatest environmental disaster we’ve encountered, increasing the demand and need to map it, and raising questions of its relation to the massive die-offs of Pacific starfish, herring,

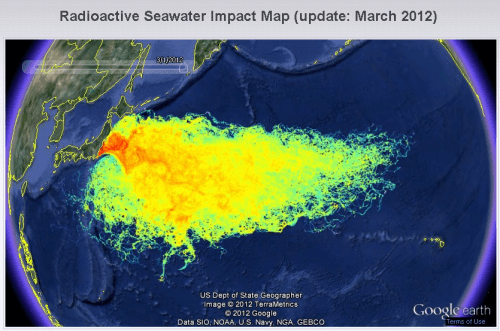

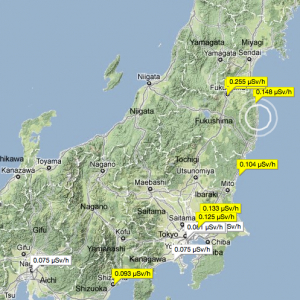

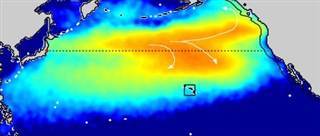

By using the internet to upload and broadcast shifting radiation levels, maps of radiation gain a new flexibility and readability: the platform of Google Maps can instantaneously register ambient radiation in air, earth, water, or rainfall, as well as the radioactivity of food, in striking visualizations of geographic space. This came to a head in the maps made to respond to the threats of the Fukushima Daiichi nuclear disaster of March 2011, and to the spread of radiation from the explosion across the Pacific. They remind us of how wind and ocean waters distributed plumes of radioactive waste over time, as radioactive materials from the meltdown of three reactors were falsely imagined to have spread rapidly across almost all of the Pacific–

Hoax Projection of radioactive plume from Fukushima Daiichi Plant

–and even extending into parts of the Atlantic ocean, in ways that generated considerable panic as a density of radioactive waste moved toward the bucolic seas of Hawai’i–as if to create a sense of the terror of the contamination of the natural setting by radioactive plumes.

There was a sense that the natural disaster could be recorded in real-time, reflecting the extent to which Google Maps had changed our expectations for the mappability of global phenomena, and the visibility of fears of global contamination that could be registered and recorded as a real-time apocalypse as everyone could be their own prophet of the end of times on the platform that it allowed.

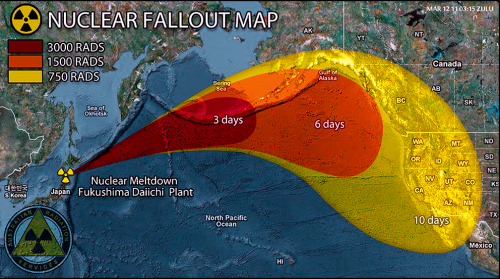

The news ecology itself seems to have shifted, indeed, as what was undeniably an environmental disaster of potential global import, if not an event with potentially massive environmental consequences, was played down in the mainstream media and by science reporting agencies, in ways that led alternative media to promulgate all the more alarmist narratives of radioactive fish off the United States, die-offs of seafood, and radiation on beaches in California and Oregon, along with the image that the pristine seawaters of Hawai’i had become a sort of epicenter where all radioactive waste had accumulated and come to rest, as if to confirm the extent of technological disaster.

The maps suggested a sense of atmospheric proximity revealed in radioactive plumes, to be sure, that generated multiple fake maps, designed as forms of fear-mongering to accentuate the proximity of radiation in the environment. One used an undated map with the NOAA seal to suggest the spread of something from Japan–and folks assumed it was radioactive, given the climate online–although it was in fact a map measuring the effects on wave height of the March 11, 2011 tsunami provoked by the Tohoku earthquake, and the communication of wave energy across the Pacific–perhaps more of interest to surfers than those fearing fallout.

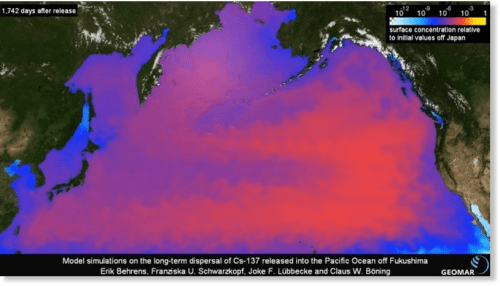

For the explosion created huge challenges for mapping a sense of global radiological risk, far transcending any place or the site of its explosion: the greatest levels of radiation were far removed from the site of the disaster, even as contamination on the ground, where radioactive deposits were far more intense, followed geographical proximity. The radioactive plume required a far broader time-lapse to travel by ocean currents across the Pacific–here shown after two and a half years, based on water samples taken in 2013. Its levels, if far lower than limits for drinking water at 1 Becquerel per cubic meter, were projected to peak at 5 in 2015-16–far less than you might eat in a banana, or experience in a dental x-ray.

—

Discontinuities trumped continuities, however: levels of Cesium-134–the isotope that was the fingerprint of the Dai-ichi explosion–confirmed the extent of the diffusion of radioactive isotopes linked to the Fukushima reactor, which by 2015 had not only contaminated Canadian salmon, as tracked at the University of Victoria, but spread across much of the Pacific Ocean. They left an incredible intensity of the fingerprint isotope in offshore waters, where it perhaps recirculated in the Alaskan gyre, and the radioactive plume was projected to reach American shores some 1,742 days after it was released.
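To put the travel time in perspective, here is a rough back-of-the-envelope sketch of how much of each cesium isotope would remain after 1,742 days of radioactive decay alone. The half-lives are assumptions brought in from standard reference values (roughly 2.06 years for Cesium-134 and roughly 30 years for Cesium-137), not figures from the maps discussed here, and the sketch ignores dilution and mixing in ocean water entirely.

```python
# Fraction of an isotope left after a given time, from decay alone.
# Half-life values are approximate reference figures (an assumption of this
# sketch), and ocean dilution is deliberately ignored.

DAYS_PER_YEAR = 365.25
CS134_HALF_LIFE_DAYS = 2.065 * DAYS_PER_YEAR   # roughly 2.06 years
CS137_HALF_LIFE_DAYS = 30.1 * DAYS_PER_YEAR    # roughly 30 years


def fraction_remaining(days, half_life_days):
    """Return the share of the original isotope that has not yet decayed."""
    return 0.5 ** (days / half_life_days)


if __name__ == "__main__":
    travel_days = 1742  # projected travel time cited in the text
    for name, half_life in [("Cs-134", CS134_HALF_LIFE_DAYS),
                            ("Cs-137", CS137_HALF_LIFE_DAYS)]:
        share = fraction_remaining(travel_days, half_life)
        print(f"{name}: {share:.2f} of the original amount remains")
```

On these assumptions, only about a fifth of the Cesium-134 would survive the crossing, against about nine tenths of the Cesium-137. That is one reason the short-lived isotope can serve as a fingerprint: any Cesium-134 still detectable must have been released recently.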

If still detectable in shellfish sampling as well as salmon, in 2015, the dispersion of radiation made a delayed landfall to the Pacific coast in late 2016, much as the arrival of isotopes across the Pacific was recorded–raising questions of the travel by water of Cesium across the Pacific.

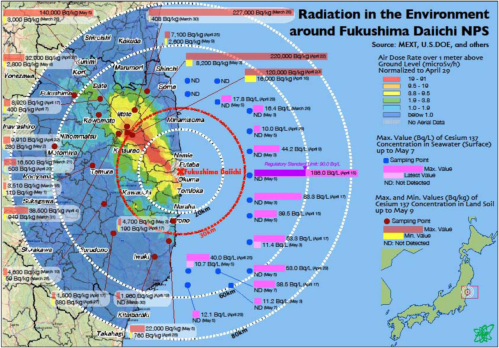

The air dosages of radiation immediately around Fukushima Daiichi suggest a dangerous level of radiation on the mainland, however, apparently confirmed in the growth of thyroid cancer, especially in children, birth defects, and the retention of Cesium 134 in power station workers who show an incidence of leukemia–

and a rise in thyroid cancer in California that follows no distinct geographical pattern–but may be due to pesticides, agricultural contamination, or other waste.

An assembly of multiple static and dynamic maps might reveal an otherwise ‘hidden map’ of local levels of radiation, however, and expose otherwise hidden local dangers populations face from radiation leaks. The notion of a shared database that can be regularly updated online in case of eventual emergency suggested a way of monitoring and reacting to panic levels in the dispersion of radiation from the nuclear explosion, and indeed of measuring the unclear relation between proximity to a blast and the intensity of remaining radiation and radioactive dangers.

Although the measurements of danger are debated by some, mapping radiation levels provides a crucial means to confront meltdowns, the breaching of chambers’ walls, or leaks, and to define limits of danger in different regions. Interestingly, the map stands in inverse relation to the usual mapping of human inhabitation: rather than map sites of habitation or note, it tracks or measures an invisible site of danger as it travels under varied environmental influences in ways hard to predict or track. The notion of what such a disaster would be like to map has been hypothetical–and still is, to an extent, in datasets like the National Radiation Map, which use the Google Earth platform or available GIS templates to diffuse information not easily accessible. This is a huge improvement over the poor state of information at the time of the threatened rupture of the containment structure at Three Mile Island, near Harrisburg PA, in 1979, when no sources had a clear idea of what radius to evacuate residents around the plant, or how best to address health risks: if a radius of 10 miles was chosen in 1979, the Chernobyl disaster required a radius of over double that. The clean up of the plant went on from 1980 to 1993; within a 10 mile radius, high radiation levels continue in Harrisburg today.

The larger zones that were closed around the more serious and tragic Chernobyl Nuclear Power Plant, which in fact exploded in April 1986, led to a clear Zone of Alienation that was evacuated three days after the explosion, and to considerable fear of the diffusion of radioactive clouds borne in the environment to Europe and North America. The irregular boundary of immediate contamination, including pockets of radiation hotspots not only in Belarus but in Russia and Ukraine, suggests limited knowledge of the vectors of contamination, and imprecise measurements.

This raised a pressing question: how to render what resists registration or simple representation–and even consensus–on a map? And is this in any way commensurate with the sorts of risks that maps might actually try to measure?

The tragic occurrence of the 2011 Fukushima meltdown raised similar questions, but converged with a new basis to define an internet-based map of the region. If the incident provided a case in point of ready demand for maps, the wide availability of online access in the region led to considerable improvisation with the value of a crowd-sourced map defined not by the local government or nuclear authorities, but by the inhabitants of the region who demanded such a map. The accident that resulted from the tsunami no doubt contributed to a resurgence and perfecting of the crowd-sourced map both in the United States and, in a more flexible way, Japan, as websites try to refine the information carried in radiation maps, creating open-access maps that can quickly register the consequences of nuclear disaster–or indeed detect such a leak or structural compromise–in the age of the internet, and offer a reassuring image (or a cautionary image) adequate to meet the invisible and intangible diffusion of radiation in the local or regional environment.

Demand for such online databases reveals and feeds upon deeper fears of an official failure to share such data. Indeed, the drive to create a map of some authority has dramatically grown in the light of recent radiation disasters that were not mapped earlier, in part because of liability issues and because of fears that governments’ protection of the nuclear industry has compromised their own responsibility. If the growth of online sites is a sensible and effective use of data-sourcing on an open platform created by internet providers, it is also one no doubt fed by a paranoid streak in the American character stoked most heavily these days by folks on the Right. I’ve decided to look at two examples of these maps below, both to reflect on the nature of a crowd-sourced map and to suggest the plusses and minuses of their use of a GIS framework to visualize data.

The emphasis on the map as a shared database and resource to monitor and publicize sensitive information about radiation levels has unsurprisingly increased with the recent threat of contaminated waters that breached the containing walls during the meltdown of the Fukushima Daiichi reactor in March 2011, and with the difficulties that providing a reliable map of radiation creates: although reactors are licensed by governments and monitored by government agencies, debates about the public dangers that reactors pose concern both the danger levels of radiation and the ability to collect exact data about its spatial distribution and communication through waters, air, and other environmental vectors. The ability to upload such measurements directly to data-sharing platforms provides new access to the relatively low-cost creation of maps that can be shared online among a large group of people in regularly updated formats. Given the low cost of accumulating a large data-set, Safecast concentrated on devising a variety of models to visualize distributions along roads or by interpolating variations in existing maps.
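How might a pile of geotagged readings become a map layer? The sketch below is one simple possibility, not Safecast’s actual method: it snaps each reading to a grid cell and keeps the median value per cell, so that a single stray reading does not color a whole square. The function names, the cell size, and the sample readings are all hypothetical, invented for illustration.

```python
# A minimal sketch of turning geotagged readings into a gridded map layer.
# This is NOT Safecast's pipeline; names, cell size, and data are hypothetical.

from collections import defaultdict
from math import floor
from statistics import median


def grid_cell(lat, lon, cell_deg):
    """Return the integer (row, column) index of the cell containing a point."""
    return (floor(lat / cell_deg), floor(lon / cell_deg))


def aggregate(readings, cell_deg=0.01):
    """Group (lat, lon, value) readings by grid cell; return the median per cell."""
    cells = defaultdict(list)
    for lat, lon, value in readings:
        cells[grid_cell(lat, lon, cell_deg)].append(value)
    return {cell: median(values) for cell, values in cells.items()}


if __name__ == "__main__":
    # Invented sample readings: (latitude, longitude, dose rate in microsieverts/hour)
    sample = [
        (37.42, 141.03, 0.30),
        (37.48, 141.09, 0.50),
        (37.45, 141.05, 0.40),
        (35.68, 139.76, 0.05),
    ]
    for cell, value in sorted(aggregate(sample, cell_deg=1.0).items()):
        print(cell, value)
```

Taking the median rather than the mean is a design choice that suits crowd-sourced data, since one malfunctioning counter then shifts a cell’s value far less.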

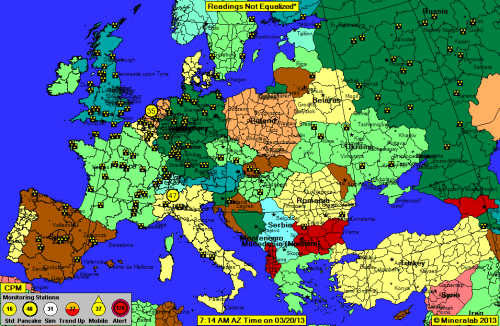

The group-sourced website showing regional and local fluctuations is not visually or cartographically inventive, but poses questions about using data feeds to reveal a hidden topography, as it were, of radiation across the country or landscape–as if to remedy the absence of an open-access trustworthy source of this information that local governments would sponsor or collate. Against a field that notes the sites of active reactors by standard hazard signs, viewers can consult fluctuating readings in circled arabic numbers to compare the relative intensity measured at each reporting monitor station. While rudimentary, and without adjustments or standardized measurements, this is an idea with legs: the Safecast Project proposes to take mapping radiation in the environment along a crowd-sourced model–an example of either a pluralization of radical cartography or a radical cartography that has morphed into a crowd-sourced or “vigilante” form of mapping radiation levels.

Safecast wants to create a “global sensor network” with the end of “collecting and sharing radiation measurements to empower people with data about their environments.” Its implicit if unspoken message of “Cartography to the People!” echoes a strain in American skepticism, if not paranoia, about information access, and fear of potential radioactive leaks–in a counter-mapping of USGS topographic surveys, the movement to generate such composite maps on the internet is both an exciting dimension of crowd-sourced cartographical information, and a potentially destabilizing moment of the authority of the map, or a subversion of its authority as an image produced by a single state.

The interesting balance between authority and cartography is in a sense built into the crowd-sourced model implied by the “global sensor network” that the Safecast corporation wants to construct: since such a record is not readily available in maps on government-sponsored sites, those interested in obtaining a cartographical record of daily shifts in relative radioactive danger can take things into their own hands with a handy App.

The specific “National Radiation Map” at RadiationNetwork.com aims at “depicting environmental radiation levels across the USA, updated in real-time every minute.” They boast: “This is the first web site where the average citizen (or anyone in the world) can see what radiation levels are anywhere in the USA at any time.” As impressive are the numbers of reactors that dot the countryside, many concentrated on the US-Canadian border by the Great Lakes, as in Tennessee or by Lake Michigan. Although a credible alert level is 100, it’s nice to think that each circle represents some guy with a Geiger counter, looking out for the greater good of his country. The attraction of this DIY cartography of inserting measurements that are absent from your everyday Google Map or from the Weather Channel is clear: self-reporting gives a picture of the true lay of the radioactive land, one could say. This is a Jeffersonian individual responsibility of the citizen in the age of uploading one’s own GPS-determined measurements; rather than depending on surveying instruments, however, readings from one’s own counters are uploaded to the ether from coordinates that are geotagged for public consumption.

Of course, there’s little standardization of measurements here, as these are all self-reported from different models and designs of counter–they list the fifteen acceptable models on the site–that broadcast their own data-measurements or “raw radiation counts,” which makes the map of limited scientific reliability, with few controls. So while the literally home-made nature of the map has elements of a paranoid conspiracy–as most any map of nuclear reactors across the country would seem to–the juxtaposition of trefoil radiation hazard signs against the bucolic green backdrop oddly renders it charmingly neutral at the same time: the reactors are less the point of the map than the radiation levels around them.

But the subject that is mapped is anything but reassuring. When we focus on one region, the density of self-reported sites gains a finer grain: in the Northeast, we can see the concentration of hazard signs noting reactors clustering around larger inhabited areas, oddly, like the ring around New Jersey, just removed from New York, the nuclear reactors in the triangle of Tennessee and Virginia, or those outside of Chicago and in Iowa, and one sees a somewhat high reading near Harrisburg PA. But it’s reassuring that a substantial number of folks were using their Geiger counters at that moment, and inputting data into this potentially useful but probably also potentially paranoid site. I hope they do interview them beforehand, given the very divergent readings at some awfully proximate sites.
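Short of interviewing every contributor, a site could at least flag readings that sit far out of line with their neighbors. What follows is only a sketch of that idea, and nothing here describes how the Radiation Network actually vets its data: the station names, coordinates, counts, the 50 km radius, and the factor-of-three threshold are all invented for illustration.

```python
# A minimal sketch of flagging stations whose readings diverge sharply from
# nearby stations. All names, thresholds, and data are hypothetical.

from math import asin, cos, radians, sin, sqrt
from statistics import median

EARTH_RADIUS_KM = 6371.0


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between two latitude/longitude points."""
    p1, p2 = radians(lat1), radians(lat2)
    dlat, dlon = p2 - p1, radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(p1) * cos(p2) * sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(a))


def flag_divergent(stations, radius_km=50.0, ratio=3.0):
    """Return names of stations reading more than `ratio` times above or below
    the median of the other stations within `radius_km`."""
    flagged = []
    for name, lat, lon, cpm in stations:
        neighbours = [c for n, la, lo, c in stations
                      if n != name and haversine_km(lat, lon, la, lo) <= radius_km]
        if len(neighbours) < 2:
            continue  # too few nearby stations to make a comparison
        mid = median(neighbours)
        if mid > 0 and (cpm > ratio * mid or cpm < mid / ratio):
            flagged.append(name)
    return flagged


if __name__ == "__main__":
    # Invented stations: (name, latitude, longitude, counts per minute)
    stations = [
        ("A", 40.27, -76.88, 20),
        ("B", 40.30, -76.85, 22),
        ("C", 40.25, -76.90, 19),
        ("D", 40.28, -76.80, 95),
        ("E", 34.05, -118.24, 40),
    ]
    print(flag_divergent(stations))
```

A flag of this kind says nothing about which reading is wrong. A high count might mark a faulty counter or a genuine local hotspot, so it is a prompt for a second look rather than a correction.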

If we go to a similarly dense network on the West Coast, the folks at Mineralab offer a similar broad spread among those informants, and the odd location of so many reactors alongside rivers–no doubt using their waters for cooling, but posing potential risks of downriver contamination at the same time.

Although the view of Southern California is perhaps still more scary, and reminds us that the maps have not taken time to denote centers of population:

And there’s a charming globalism to this project. Things aren’t particularly worse off in the USA in terms of the reliance on reactors, if we go to Europe, where reporters are similarly standing vigilant with Geiger counters at the ready given the density of those familiar trefoil hazard signs in the local landscape:

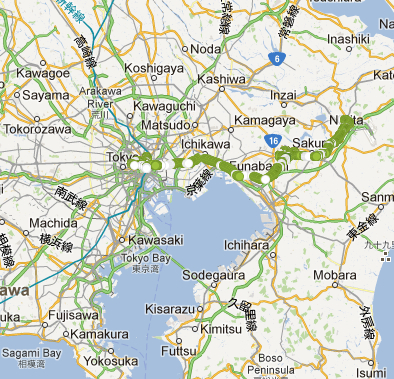

The truly scary aspect of that map is the sheer distribution of reactors, no doubt, whose hazard signs dot the countryside like scary windmills or danger signs. And, just to put in perspective the recent tsunami that breached the walls of the Fukushima reactor, leaking radioactive waste and sending material waste and radioactive waters to California’s shores, consider Japan. An impressive range of reactors dots the countryside, and but one vigilant reporter in Sapporo notes the very low levels of radiation that reach his counter:

Within a week after the March 11, 2011 earthquake hit Japan, the greatest ever to hit the country, Safecast was born as a volunteer group dedicated to open-platform radiation monitoring in the country and worldwide. In addition to leaving over 15,880 dead in the quake and tsunami, the tsunami caused level 7 meltdowns at three reactors in the Fukushima Daiichi Nuclear Power Plant complex, necessitating the evacuation of hundreds of thousands of residents, as at least three reactors exploded when hydrogen gas breached outer containment buildings after cooling systems failed. When residents who dwelled within a 20 km radius of the Fukushima Daiichi Nuclear Power Plant were asked to evacuate, the United States government urged American citizens who lived within a radius of up to 80 km (50 mi) of the plant to evacuate. This raised questions about the dispersal of radiation from the plant, and deeper questions arose about the safety of returning within a set zone, or the need to demarcate a no-entry zone around the closed plant.

The rapid measurement of radiation distributions not only met wide demand but provided a new mode of sharing information about dangerous levels of radiation: as of July 2012, Safecast included some 3,500,000 data points recording radiation levels. In ways that capitalize on how the internet allows a massive amount of data to be uploaded from numerous points around the world, Safecast exploits a model of data-sharing on its open platform, offering different models to visualize their relation to each other: Safecast allows viewers to visualize the maps against a road-map, topographic map, and map of local population distributions, so that they can better understand their relation to the readings that have been collated online.

The process of massing data is what makes Safecast such a pioneer in creating a large range of readings that can promise a more comprehensive picture of radiation distribution than the uneven distributions that isolated readers might allow. The Safecast team hopes and promises to improve upon their readings by designing and promoting a new Geiger counter, and has made available the handy workhorse bGeigie, although the cost of $1000 apiece and the time-consuming nature of its assembly are major obstacles they’re trying to confront. The smaller and handier bGeigie Nano Kit is a dandy device you can easily carry or affix to your car, and whose measurements are easily uploaded to the Safecast website:

The DIY glee of presenting the tool to measure radiation levels with one’s own mini-Geiger is part of the excitement with which Safecast promises to provide a new map of Japan’s safely habitable land. The excitement also derives from a belief in the possibility of “empowering” people to measure and compile data about their environments, rather than trust a map that is assembled by “experts” or official sources who have not been that forthcoming with data-measurements themselves. The above smile also reflects the vertiginous success of Safecast in distributing its bGeigie, and the boast to have amassed an open-sourced database for open access.

This seems the new key to revealing knowledge in the multiple visualizations that Safecast offers for viewers: with the enthusiasm of great marketing, their website announces with some satisfaction: “attach it to your car and drive around collecting geo-tagged radiation data easily uploaded to Safecast via our API upload page.” This suggests a whole other idea of a road trip, or even of a vacation, in the multiple ‘road-maps’ that volunteers have uploaded for approval on the Safecast site, with over 10,000 data points deriving from bGeigie imports, that Safecast can readily convert to a map:

This is also quite serious stuff, taking crowd-sourced cartography to a new degree: with some 4,000,000 radiation points detected by the Safecast team, the website is able to assemble a comprehensive map of relatively uniform readings, complementing the sites of radioactivity assembled and culled by the Japanese government with their own independent data from an impressive range of aggregate feeds of environmental data from several NGOs and individual observers across Japan’s coast:

The image of such aggregate data feeds allowed Yahoo! Japan to build their own map displaying the static sensor data of Safecast:

Kailin Kozhuharov has created a detailed map to visualize the distribution of radiation levels in the island through the Safecast database:

The coverage is truly impressive, and multiplication of data points technically unlimited and potentially comprehensive. While divergent readings may be entered every so often as a Geiger counter wears down or malfunctions, controls are built into the system. An example of the coverage in Japan, again the focus of mapping radioactivity in the wake of the recent Fukushima disaster, where Safecast is based, using locally obtained data once again:

The widespread appeal of this device, even more than the Radiation Network, reveals the widespread nature of a belief or suspicion–no doubt with some grounds or justification–that a true map of the dangers or levels of radiation is in fact never provided or available to citizens, and that the failure of governments to communicate an accurate mapping of radiation demands a privatized response. And with its partnership with Keio University, developed after Fukushima, Safecast has developed the “Scanning the Earth” (STE) project that maps the historical data of radiation readings across the globe. With the Fukushima prefecture, Safecast has also issued a comprehensive global mapping of the dispersal of high levels of radioactivity from Fukushima from its own massive database to chart the impact of the environmental disaster over time:

Although this map reflects the ties to the MIT Media Lab, it is informed by a dramatically new local awareness of the importance of creating a map flexible enough to incorporate locally uploaded data measurements for open access. It is also a great example of how an event can create, provoke, or help to generate a new sense of how maps can process the relation of local phenomena to the global in a variety of readily viewable formats. The demand for creating this world map clearly proceeded from the local event of the 2011 Tsunami: Safecast was in a position to observe the importance of maintaining an open-sourced database (now including some 2,500,000 readings) that offers an unprecedented basis for developing a platform of data-sharing that is readily available online. In working with the same databases, they also offer some cool visualizations of the data that they collect to illustrate differential radiation levels in readable ways linked to potential dangers to individual health:

The new facility that the internet has created in the ability to upload, share, and compile information from diverse and multiple sites without considerable costs has so lowered the cost of collaboration that it can occur without any reference to or dependence on a central governmental authority. This has allowed an immense amount of simultaneous data to be regularly uploaded and stored with almost no extra cost by a group of volunteers, and to be available in transparent ways on an open-access platform. Late in updating this post, I came across an earlier PBS NewsHour episode on Safecast’s interest in data-collection in the wake of the disaster, and the demand of local residents in Japan for further data, given the silence of official government sources on the disaster and its dangers:

(The episode offers great data on using Geiger counters to detect radiation levels at multiple sites near the exclusion zone that rings the reactor, including a restaurant parking lot.)

The means for offering local contributions to a world map of radiation level distributions reveal an expanded ability to share information in a map about the relation of place to environmental disasters. Indeed, the map itself foregrounds new graphical forms of information-sharing. There are clear problems with the Safecast model, to which Japan, in fact, is likely to be an exception: Japan was already providing online access to large numbers of its population in 2003, offering free wi-fi in trains, airports, and cafés or tea houses. In comparison, the far more limited share of the population with access to wi-fi or online resources in rural American towns, or even in urban areas, would make such open access less feasible in the United States, where a similar movement has failed to expand, and not only because of the lack of a disaster of similar proportions. There is the danger that the “freedom of information” they champion is in the end not as openly accessible as one would wish: if roughly one quarter of hotspots worldwide are in the United States, it shared with China and Italy the lowest number of hotspots per person, at lower than 3 per person as of 2007, while Japan had nearly 30 million 3G connections. This creates a significant obstacle to the expansion of the internet as a universal access service outside urban areas with municipal wireless networks, despite the significant plans to expand internet access on interstates. Despite plans to expand free service zones in Asia, Canada, and parts of the Americas, the broadcasting of regional variations in a natural disaster would be limited.

There may be something oddly scary in the fact that Safecast has had its own corporate profile and successful Kickstarter campaign, marking the devolution to the private sphere of the sort of public traditions of cartography formerly undertaken by states for their own populations. For whereas we have in the past treated cartographical records as an accepted public good, there is limited acceptance of accessible data collection and synthesis. As a result, one seems more dependent on active participation in one’s own construction of a more accurate map of radiation levels, or upon a network of vigilant vigilante cartographers who can upload data from wi-fi zones. Is there the risk of a disenfranchisement of a larger population, or is data-sharing the best available option?

An alternative model for mapping radiation might be proposed in the compelling map of the oceanic travel of radiation (probably in contaminated waters, but also in physical debris) that has been suggested by vividly compelling cartographical simulations of the long-term dispersal of Cesium 137 (137Cs) from waters surrounding the Fukushima reactor. Although the map is indeed terrifyingly compelling, in relying only on oceanic currents to trace the slow-decaying tracer across the Pacific, the video map seems to arrogate capacities of measuring the dispersal over the next ten years of radioactive material in ocean waters with a degree of empiricism that it does not in fact have. How ethical is that?

For all the beauty of the color-spectrum map of a plume of radiation expanding across ocean waters–and the value of its rhetorical impact of strikingly linking us directly to the reactor’s meltdown–its projected charting of the plume of contaminated waters due to reach the waters of the United States during 2014, if normal currents continue, is far less accurate or communicative than it would seem. To be sure, as the Principal Investigator and oceanographer Vincent Rossi, a post-doctoral researcher at the Institute for Cross-Disciplinary Physics and Complex Systems in Spain, notes, “In 20 years’ time, we could go out, grab measurements everywhere in the Pacific and compare them to our model.” But for now, this expanding miasma offers an eerie reminder of the threat of widespread circulation of radioactive materials worldwide.

Indeed, the charts that project the spread of radiation over a period of five years, benefitting from the power of computer simulations to map by tracer images the diffusion of radioactive discharge along clear contour lines in the Atlantic, provide a compelling chart of how we might want to look and measure the levels of radioactivity in our national waters.