Shifting from a vision of blissed-out honeybees buzzing around a flowering birch tree in city gardens to the history of the individual bricks of the city’s buildings, Campbell McGrath conjured a distinctly modern melancholy that imagines New York reduced to an “archipelago of memory.” Taking poetic license to link buildings of man-made bricks once “barged down the Hudson” from a hinterland of clay pits, quarries, and factories to the future history of their disintegration into the Atlantic, he asks that we imagine their eventual return to silt: “It’s all going under, the entire Eastern Seaboard,” the Miami-based poet almost exuberantly prophesied, urging that we welcome the impending ecotonal intersection between land and sea that rising temperatures have wrought. Set beside the pollen borne from the anthers of cherry blossoms to the hexagonal cells of the bees’ hives, the transience of buildings is not a surprising theme for a poet who lives in a state where sea-level rise is four times the global rate. The atmospheric stresses on coastal condominiums near Miami are in the news again, and their catastrophic destruction is apocalyptic in its own way.

In evoking the impending retreat of New York from its shores, McGrath imbued a sense of place with the stoic inevitability of monumental cataclysm. Global warming, sings the Miami-based poet, is an inevitability we must learn to welcome. Yet it is hard to acknowledge the inevitability of collapse on the ever-changing ecotone of shorelines. And it is hard to dislodge coastal California from the imagined vacation spot whose climate we could keep in aspic, as a holiday space preserved in the yellowed photographs of old family albums. If Florida always seemed to stand in for the salubrity and exceptionalism of sunny weather–its winter beaches promising fun and rejuvenation for vacationers and the elderly alike, as if the peninsula offered perpetual summer breezes and an abundance of sun–

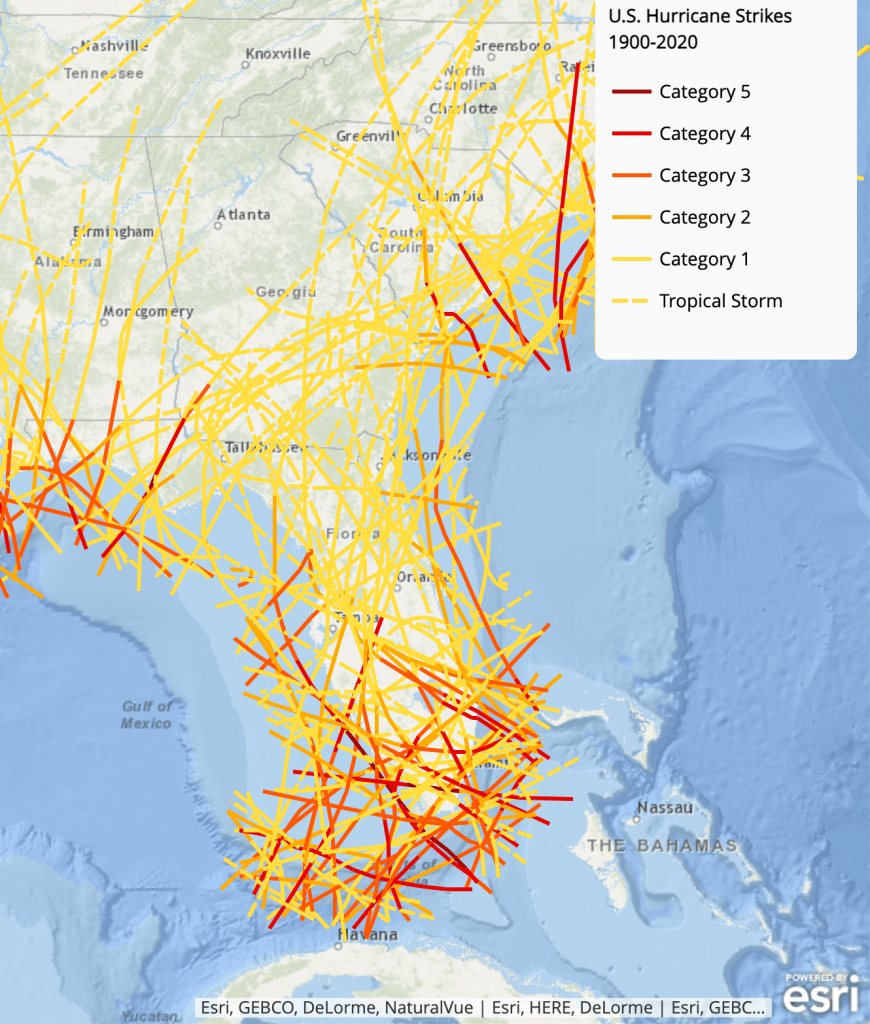

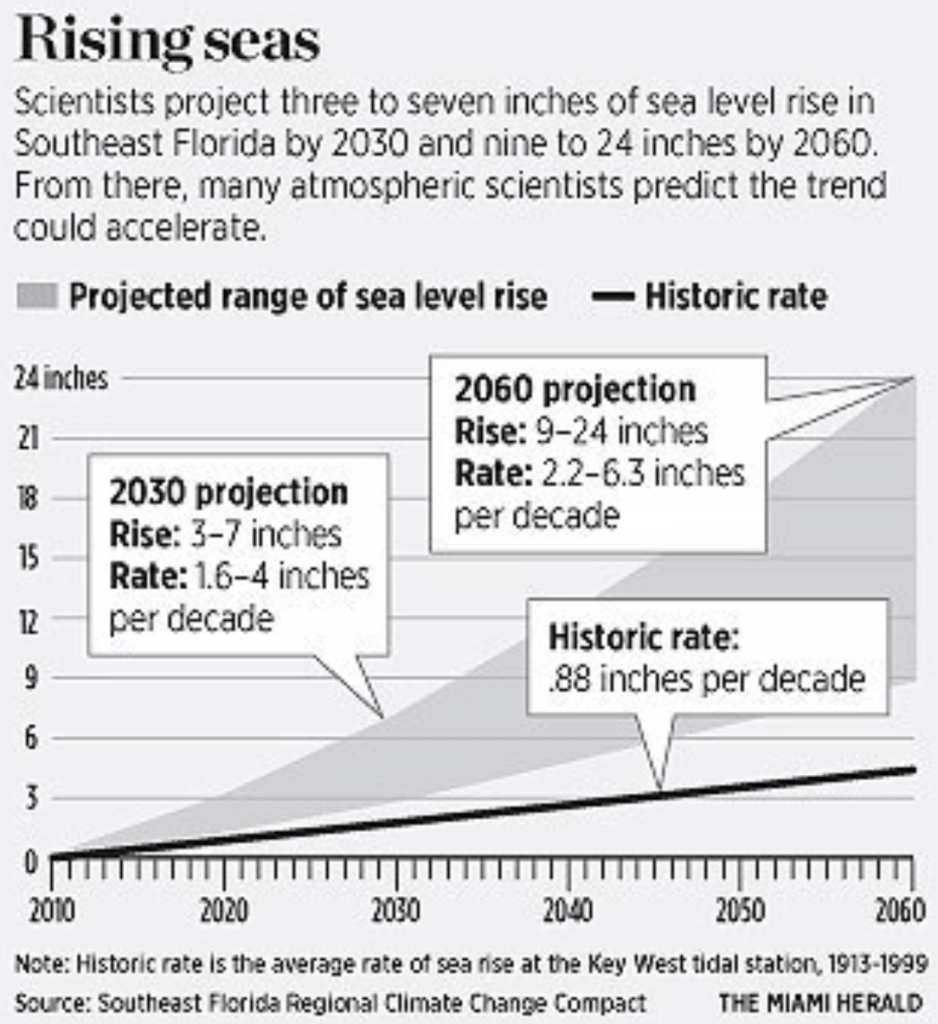

–where coastal waters promised easy access. The mythic geography that encouraged the construction of untold condominiums along the coast now seems a huge miscalculation. For as sand is eroded from those beaches with sea-level rise, we try to come to terms with an ecotonal shoreline that may not be able, with increased coastal flooding, to sustain the increased weight of condominiums built for their coastal views. Saltwater permeation of the subsoil and inland ground risks eroding the very structures built to provide access to the sea, in a rush to build towers by the ocean that preceded the increase of saltwater inundation of the “land.” As buildings are whipped by hurricanes of unprecedented intensity, and storm surges move ever more inland, the water table of the entire state is being pushed up in ways that will only remind us how much landfill the coast is actually built on.

We may have collectively adopted an attitude of near resignation at climate change and sea-level rise as intangibles by early 2020, after an unremitting sort of denialism of the “fraud” of climate change; McGrath’s poem, which preceded the terrifying collapse of the towers in Surfside, evoked a shifting map of the nation as the Atlantic rose. The disaster grabbed national attention as the residential building on the coast near Miami actually collapsed, pancaking in ways that attracted national alarm. And not for no reason. For the whole of the national seaboard seems compelled to move in exactly the direction of McGrath’s poem, which sings of the eventuality of climate change. In the poem, the American capital leaves the shores of Washington, DC, to secure more solid ground in Kansas City, leaving behind “flooded tenements” and houseboats that are “moored to bank pillars along Wall Street.” As the Atlantic rises, few “will mourn for Washington,” and the once densely inhabited seaboard is abandoned: the coast is reduced, in this dystopic flight of fancy, and the nation forced to shift its capital to western Missouri in a search for more secure ground.

The sudden collapse of half of the south tower of Champlain Towers in Surfside, FL, may be less apocalyptic in scope than the loss of the eastern seaboard. But it is now impossible to speak of offhand: we are agape, mourning residents of the collapsed tower who were trapped under the concrete rubble after its sudden pancaking. Without presuming to judge or diagnose the actual causes of the tragic sudden collapse of a twelve-story condominium along the shore, we must admit that the shock of the pancaking of floors of an inhabited condominium raises questions of how the many structural problems that surround Champlain Towers were overlooked. We ask in retrospect whether the certification process is adequate for forty-year-old weight-bearing concrete structures exposed to far more salt air and saltwater than they were ever planned to encounter; these questions also raise the problem of the increasingly anthropogenic construction of the coast. While the state of Florida long sold itself to the nation as a beach land–a site of people sprawled on towels soaking up the sun–the fluid nature of the coast as an ecotone bridging land and sea in changeable and changing ways suggests nothing less than a collision course between the increasingly fragile edge-land of the coast and the image of beachfront property that assuredly offers its future residents the health of the shoreline and a view of the sea.

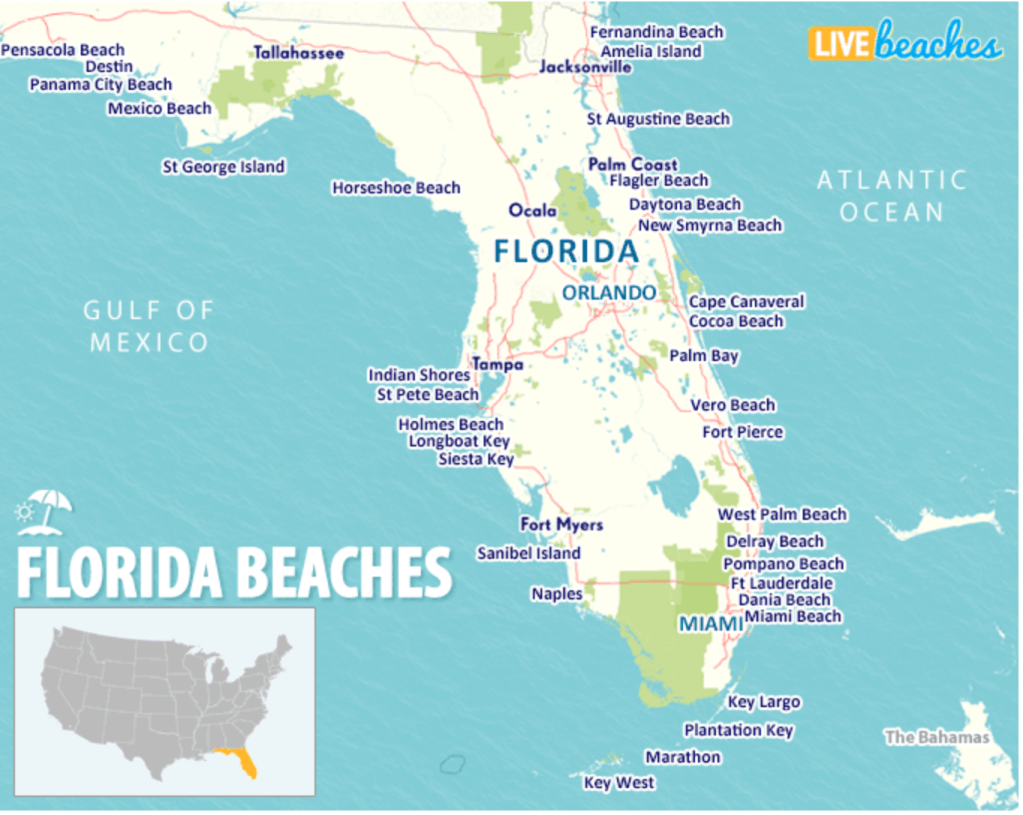

The advertisement in a publication ostensibly dedicated to geographic education offered a map of the state as a map of pleasure, pools, white plaster towers, and folks in bathing suits, picnicking, golfing and playing beachball, as the sun gazed beatifically down on the state’s azure shores: the whole peninsula seemed a beach, or in fact was one, boasting room for all on 1,400 miles of mainland coastline.

Although the realty industry and development business have sold the coastal experience of Florida as access to the shore, that increasingly popular prospect on the tranquil sea, the coast is in fact an ecotone–an intersection of land and sea, and an increasingly porous one. We must recognize the coast as an increasingly overbuilt environment, and one poorly mapped as a divide between land and sea. The absence of the shore as a clear line should be more than evident, not because of sea-level rise, but because of the density of shoreline skyscrapers and concrete residences crowding a strip between protected interior wetlands and the shore in southern Florida, a strip that is mostly built on former wetlands but presented as a clear divide between land and sea. We have encouraged the construction of a complex of coastal settlement as if it lay on solid ground, in concrete towers that are not impervious to weathering from the ocean air that washes over the shore, as we ignore the coastline’s vulnerability to ocean elements. If the porous nature of land and sea is viewed as a problem of the ocean–the adverse effects of agricultural runoff or human waste on ocean currents, beset by tidal algal blooms since the late 1970s and apparent in inland lakes–the fragility of the built environments we have made on the shore is not fully mapped for the very consumers sold residences promising ocean views, residences that are often poorly inspected due to developers’ greed. We have failed to map the coast as an ecotone–or to acknowledge the increased permeation of the shore with saltwater, both underground and in the increasingly active weather systems that envelop the shore with saline ocean air–as we imagine that the shore can be mapped as a straight line, when it cannot.

We continue to map “settlement” and “development” in terms of sold shorelines, as if they were impermeable and not themselves buffeted. We have long mapped Florida by its beaches, constructing homes for a market that privileged the elusive and desired promise of a beach view. The state trades on its allure as a sort of mecca of beach settlement, meeting a market by offering vicarious live beach webcams and refusing, in the 2020 pandemic, to close the beaches and beach life that promise an engine of economic activity, or to imagine red flags by posting danger signs on the beaches.

Risks have similarly been reduced or erased in the practice of coastal development for too long. We have long recognized the instability of shoreline communities, and not only from rising sea levels or surging seas. The lure of the beaches continues, denying their actual instability, with the otherworldly qualities of “edges” by which we imagine ourselves exhilarated, if not released from day-to-day constraints, as a destination promising a new prospect on life.

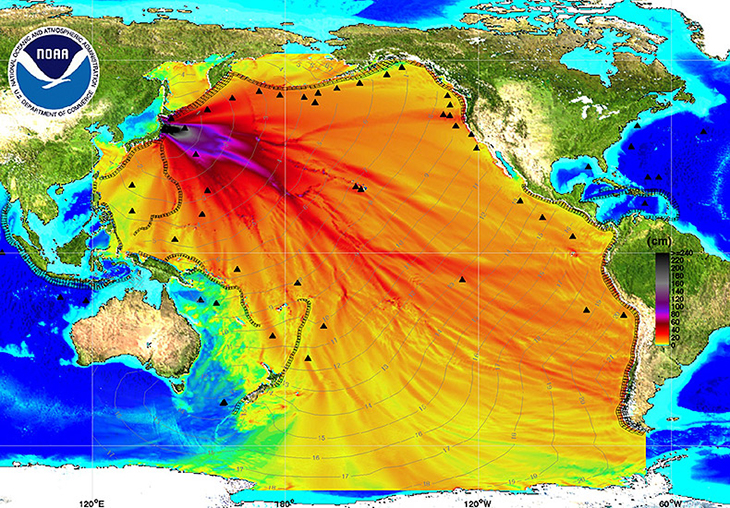

The long distinction of the state by its beaches–its uncertain edges with the ocean–demands that they be mapped and acknowledged less as a clear line between land and sea than as a permeable boundary, and as a complex geography vulnerable to both above-ground flooding and underground saltwater incursion, sustained exposure to salty air, winds of increased velocity, and an increasing instability of shores that have long been a site attracting increased settlement. Can one view the ocean surrounding the shores not only as a quiescent blue, but as engaged in redrawing the line of the shore itself, a divide long seen as a stable edge of land and sea?

From the increased intensity of hurricanes from the warming oceans, to underground saltwater incursion, to constant beach erosion and remediation, the beaches we map as lines are coastal environments whose challenges the engineers who valued the economy and strength of concrete towers did not imagine. The combination of the influx of salty air, the erosion and replacement of beach “sand,” and the increased construction of condominiums has created an anthropogenic shore that demands to be examined less as a divide between land and sea than as a complex ecotone where salt air, eroding sand, karst, and subsoil weaknesses all intersect, in ways that mitigation strategies privileging seawalls and pumping stations ignore. As importation of sand for Miami’s “beach” continues, have we lost sight of the increasingly ecotonal organization of Florida’s shores?

The point of this post is to ask how we can best map shifts in the increasingly anthropogenic nature of Miami’s shores to come to terms with the tragedy of Champlain Towers, and to urge us to remain less quiescent in the face of the apparent rejiggering of coastal conditions as a result of climate change, beyond the usual metrics of sea-level rise. For the collapse of Champlain Towers provides an occasion for considering how we map these shores, even as the forensic search continues for the immediate structural weaknesses that allowed the disaster to occur.

Miami Beach has the distinction of being the lowest site in a state with the second-lowest mean elevation in the nation, and ground zero of climate change–but the drama of the recent catastrophic implosion of part of Champlain Towers should have become national news because it suggested the possible fragility of regions of building that are no longer clearly defined as on land or sea, but exist in complex ecotones where the codes for concrete and other building materials may well no longer apply–codes that, forty years ago, were just not planned for the conditions these buildings now encounter. While we have focussed on the collapse of the towers with panic, watching the suddenly ruptured apartments as if they were exposed television sets of everyday Americans’ daily lives, the sudden collapse of the towers is hard to process, but must be situated in the opening of a new landscape of climate change that blurs the boundary between land and sea, and challenges us to update building codes for all coastal communities. The old building codes by which developers built coastal and other condominiums in the 1970s and 1980s hardly anticipated their being buffeted by salty coastal air, or having their foundations exposed to underground seepage or high-velocity rains: the buildings haven’t budged much, despite some sinking, but demand to be mapped in a coastal ecotone, where their structures bear the stress of potential erosion, concrete cracking, and an increased instability underground, all bringing increased dangers and vulnerabilities to the anthropogenic coast in an era of extreme climate change.

Surfside is a small beachside community bordering the Atlantic Ocean just north of Miami Beach, on a sandy peninsula surrounded by Biscayne Bay and the Atlantic, and crowded with several low-rise residential condominiums. While global warming and sea-level rise are supposed to be gradual, the collapse of the twelve floors of residential apartments–a very modest skyscraper–was immediate and crushing, happening as if without warning in the middle of the night. As we count the corpses of the tower’s residents crushed by its concrete floors, looking at the cutaway views of eerily recognizable collapsed apartments, we can’t help but contrast the industry and care with which bees craft their hives of sturdier wax hexagons with the tragedy of the cracked concrete slab that gave way as the towers collapsed, sending multiple floors underground in a “progressive collapse” as vertically stacked concrete slabs fell on one another, the pancaking multiplying their collective impact with a force beyond the weight of the three million pounds of concrete removed from the site.

This post seeks to question whether we have a sense of the agency of building on the shifting shores of Surfside and other regions: even if the building codes for working with concrete have changed–and demand changing, in view of the battering even reinforced concrete takes from hurricanes, marine air, flooding, coastal erosion, and seawater incursion near beachfront properties–we need a better mapping of the relation of man-made structures and climate change, and of the new coasts that we are inhabiting in an era of coastal change, far beyond sea-level rise.

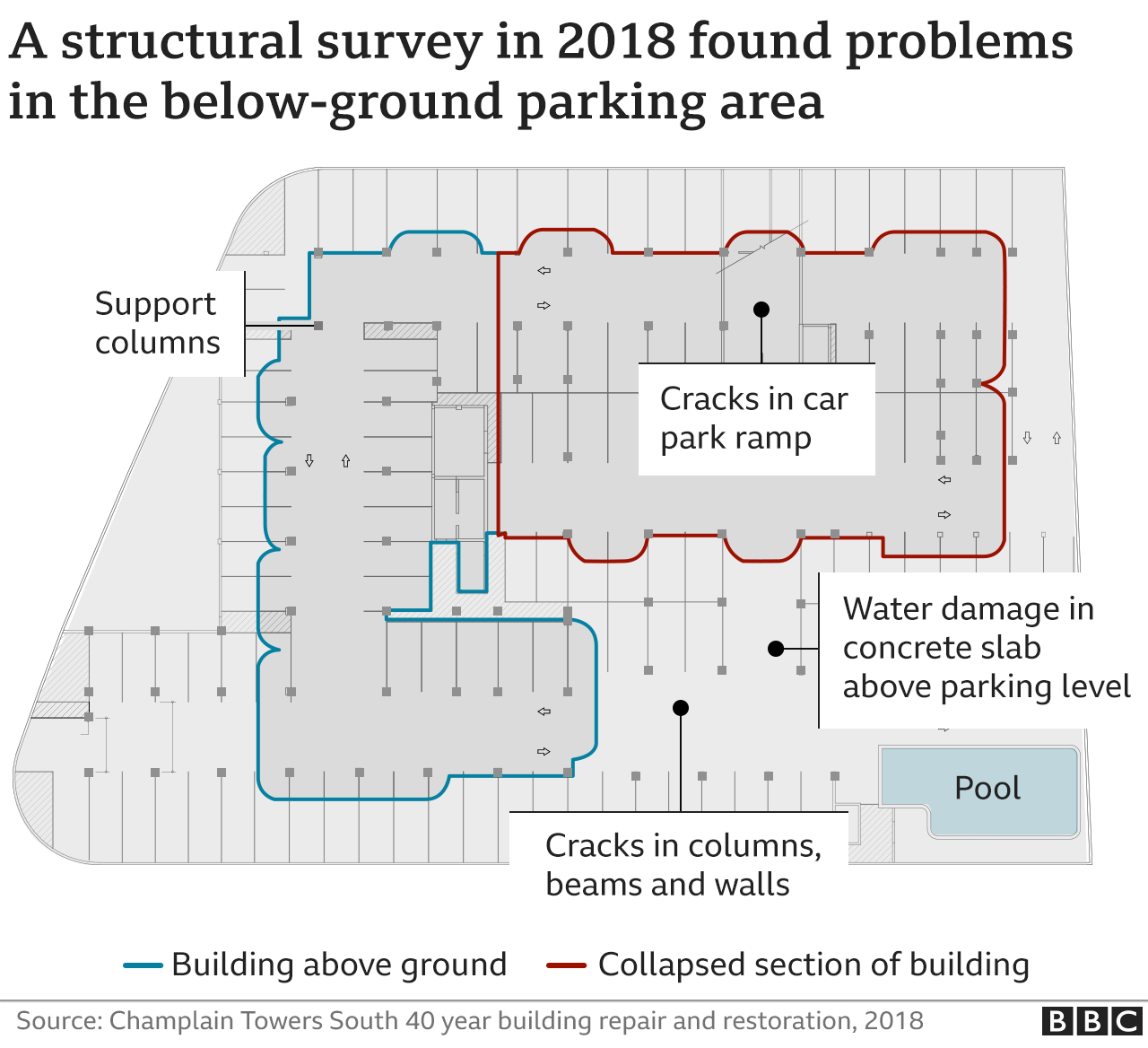

As we hear calls for the evacuation of other forty-year-old buildings along the Florida coast, it makes sense to ask what sort of liability and consumer protection exists for homeowners and condominium residents, who seem trapped not only in structures often improperly constructed for an era increasingly vulnerable to climate emergency, but by inadequate assurances or guarantees of protection. We count the corpses, without pausing to investigate the heightened vulnerability of towers exposed to the unforeseen dangers of building in coastal ecotones. Indeed, with the increased dangers of flooding from rains, high tides, storm surges, and rising sea level, the difficulty of relying on gravity for adequate drainage has led to a large investment in pumping systems in the mid-beach and North Miami area. Civil engineers have described the sudden collapse of the building as a “progressive collapse,” of the kind that occurred in lower Manhattan during the destruction of New York’s World Trade Center: it is the worst fear of an engineer, and seems to have followed the apparent cracking of the structural slab of concrete under the towers’ pool, if not other structural damage. The twelve-story building, located steps from the Atlantic Ocean, was part of the spate of condo construction that, from the 1970s, promised a new way of life; although we don’t know what contributed to a collapse that was triggered by a structural vulnerability deeper than the spalling and structural deterioration visible on its outside, the distributed liability of the condominium system is clearly unable to cope with whatever deep structural issues led to the south tower’s collapse.

Americans hold $3 trillion worth of property on barrier islands and coastal floodplains, according to Gilbert Gaul’s Geography of Risk, and keep expanding investment in beach communities even as they are exposed to increased risk of flooding–risks that may no longer be so easily distributed and managed among condominium residents alone. The collapse of the forty-year-old condominium tower in Surfside led to calls for the evacuation and closure of other nearby residences, older oceanfront buildings vaunted for their close proximity to “year-round ocean breezes” and a sandy beach where residents can kayak, swim, or enjoy a clear waterfront. The promise extended by the entire condominium industry along the Florida coast expanded in the 1970s as a scheme of development based on the health and convenience of living just steps from the Atlantic Ocean, offering residences that have multiplied coastal construction over time. The tragic collapse not only suggests the limits of the condominium as a promise of the collective shouldering of liabilities; it also reminds us in terrifying ways of the increased liabilities of coastal living in an age of overlapping ecotones, where the relations between shore, ocean spray, and saltwater incursion are increasingly blurred and difficult to manage in an era of climate change–even as residences such as Champlain Towers South itself, on its still unchanged splash page, promise easy access to inviting waters that beckon the viewer as they gleam, suggesting exclusive access to a placid point of arrival of the kind that developers still promise to attract eager customers.

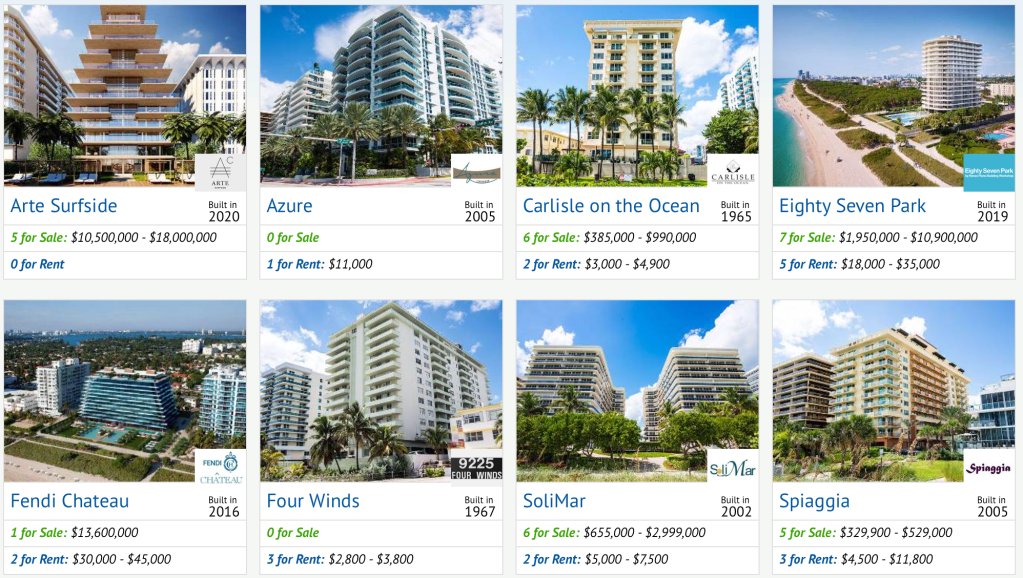

Although the shore was one of the oldest forms of “commons,” in the densely built-out coastal communities around north Miami the illusion of the Atlantic meeting the Caribbean on Miami’s coasts offers a hybrid of private beach views and public access points, encouraging building footprints whose foundations extend to the shore, promising private views of the beach they directly face, on piles driven into wetlands and often sandy areas that are increasingly subject to saltwater incursion. The names of the range of condominiums on offer evoke the sea as if it were a commodity–“surfside,” “azure,” “on the ocean,” “spiaggia”–beckoning residents to seize the private settlement of the coasts, in a burgeoning real estate market of building development that has continued since the late 1960s, promising a sort of bucolic resettlement that has multiplied coastal housing developments of considerable size and elevated prices. Is the promise of gaining a piece of the commons of the ocean, which real estate developers have long promoted, no longer sustainable in the face of the dangers of erosion of both the sandy beaches and the concrete towers, which are increasingly vulnerable not only to winds, salt air, and underwater inland flow, but to the resettlement of sands from increased projects of coastal construction?

If the collapse of the low-rise structure that boasted proximity to the beach may change the condo market, the logic of boasting the benefits of “year-round ocean breezes” runs up against the erosion of the coast and the logic of saltwater incursion in a complex ecotone, where salty air, saltwater flooding, and poor drainage may increasingly challenge the stability of the foundations of the expanding market for coastal condos. It should lead us to question the growing liability of coastal living, rather than to invest in seawalls and beach emendation in the face of such a sense of impending coastal collapse, as the investment in concrete towers on coastal properties seems revealed as castles in the sand.

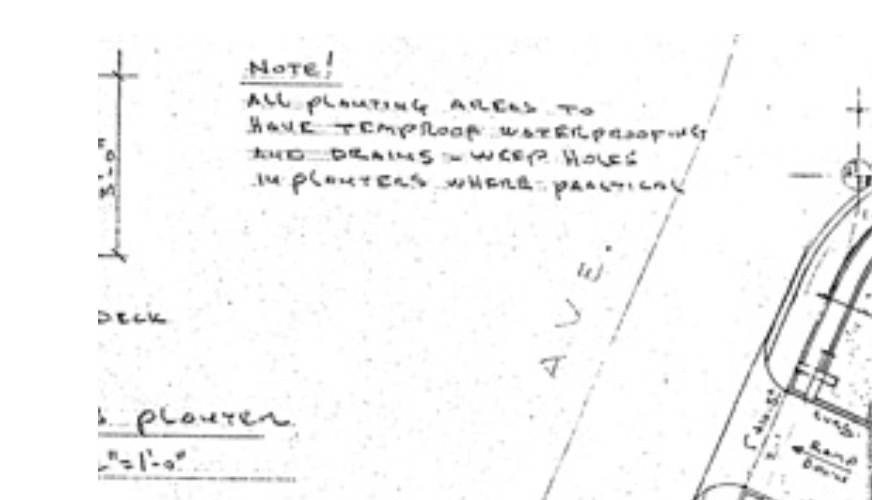

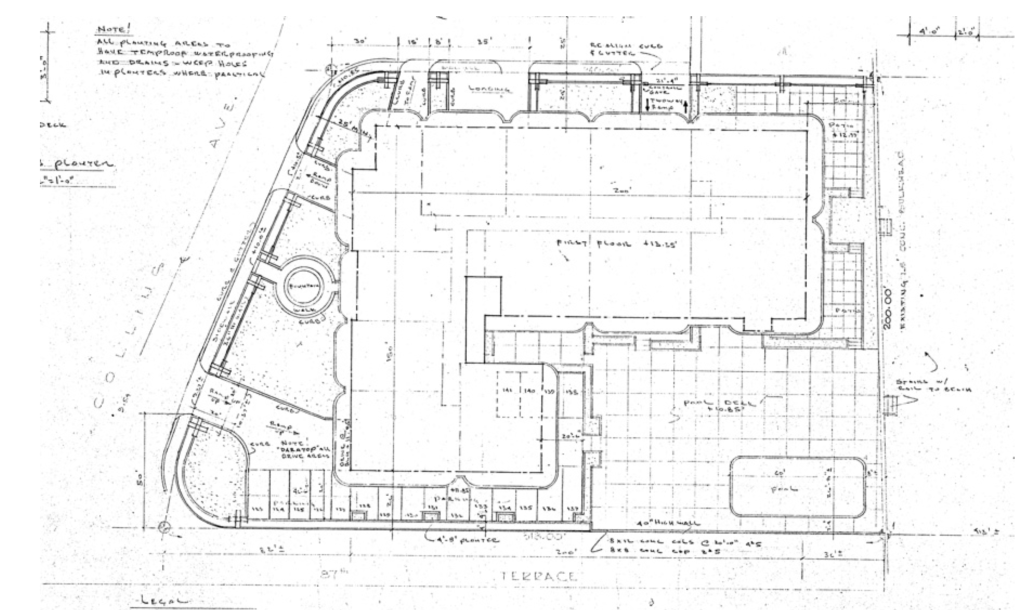

If the architectural plans for the forty-year-old building ensured adequate waterproofing of all exposed concrete structures, in an important note in the upper left, the collapse left serious questions about knowledge of the structural vulnerability of the towers, whose abundant cracking had led residents to plan for reinforcement. The danger is that the ocean air carried on Surfside breezes increased the absorption of chloride in the concrete over forty years until it cracked, allowing corrosion of the rebar and greatly weakening the supporting columns that had borne the tower’s weight. The towers had been built to the standards of the building codes of an earlier era, allowing the possible column failure at the bottom of the towers that engineers have suggested as one potential cause of the collapse–a lack of reinforced concrete at the base that would have altered their load-bearing capacity, of a kind associated with the collapse of other mid-rise concrete structures and often tied to insufficient support. The dangers of corrosion, perhaps compounded by poor waterproofing, in the cast-in-place concrete condominium towers built with concrete frames in the 1970s suggest an era of earlier building codes, often with insufficient concrete cover over structural steel; one may wonder if the weathering of concrete condominiums could create weaknesses between columns and floors–and a potential shearing of columns from the thin flat-plate slabs whose weight they bore, creating a sudden vertical collapse of the interior, with almost no lateral sway.

Even as we struggle to commemorate those who died in the terrifying collapse of a residential building, where almost a hundred and sixty residents seem to be trapped under the collapsed concrete ruins of twelve floors, we do so with intimations of our collective mortality, which seems more than ever rooted in impending climate disasters that cannot be measured by any single criterion or unique cause. The modest condo seems the sort of residence in which we all might have known someone who lived, and its sudden explosive collapse, without any apparent intervention, raises pressing questions of what sort of compensation or protection might possibly exist for the residents of buildings perched on the ocean’s edge. Six floors of apartments seem to have sunk underground in the sands in which they will remain trapped, in sharp contrast to the bucolic views the condominium once boasted.

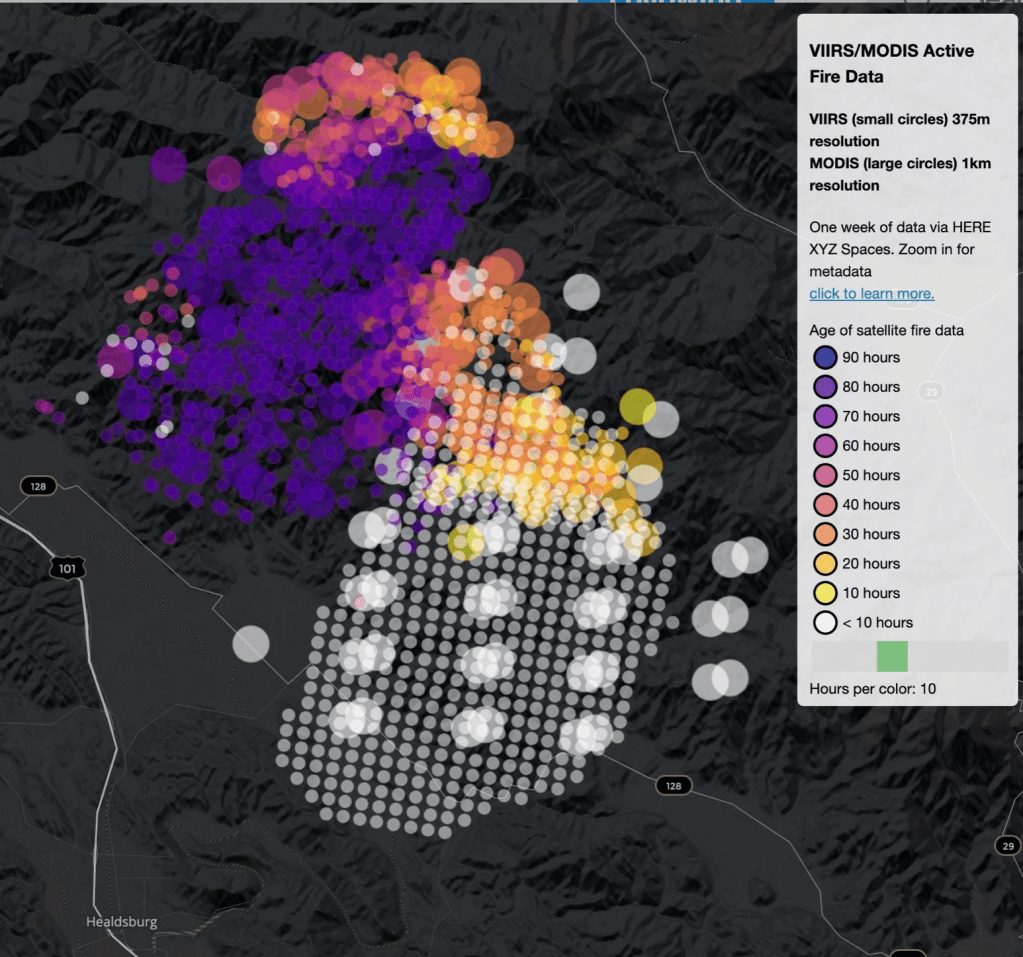

While the apparent seepage in the basement and parking garage of Champlain South that ricocheted over social media does not seem to be saltwater that seeped through the sandy ground or limestone, but either rainwater or pool water that failed to drain adequately, the concrete towers that crowd the Miami coastline, many have rightly noted, have increasingly taken a sustained atmospheric beating from overlapping ecotones of increased storms, saltwater spray, and the underground incursion of saltwater. If the causality of the sudden collapse of twelve stories of concrete was no doubt multiple, the vulnerability to atmospheric change increased the aging of the forty-year-old structure and accelerated the problems of corrosion that demand to be mapped as a coastal watershed.

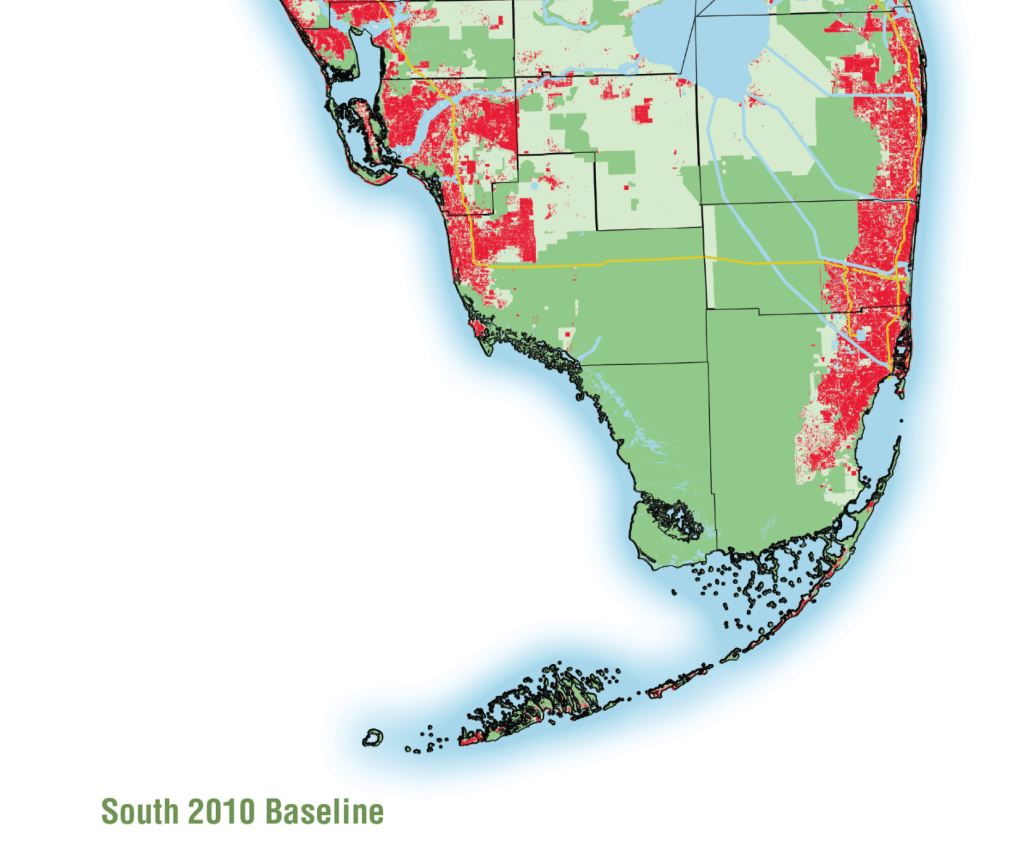

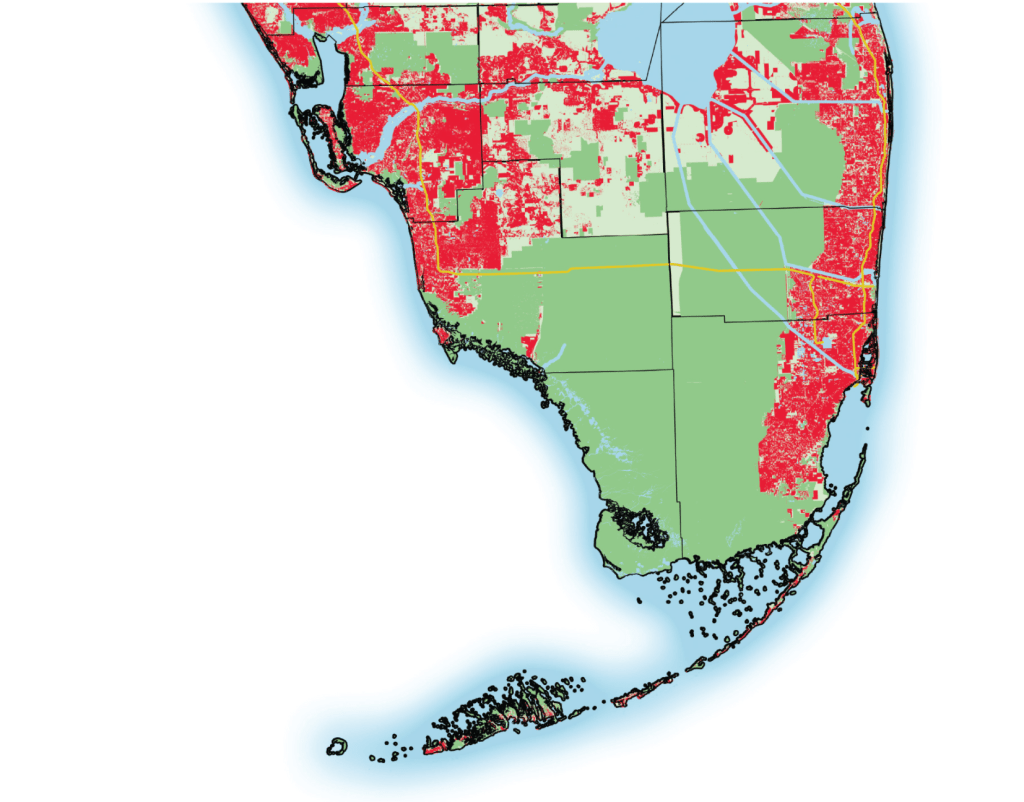

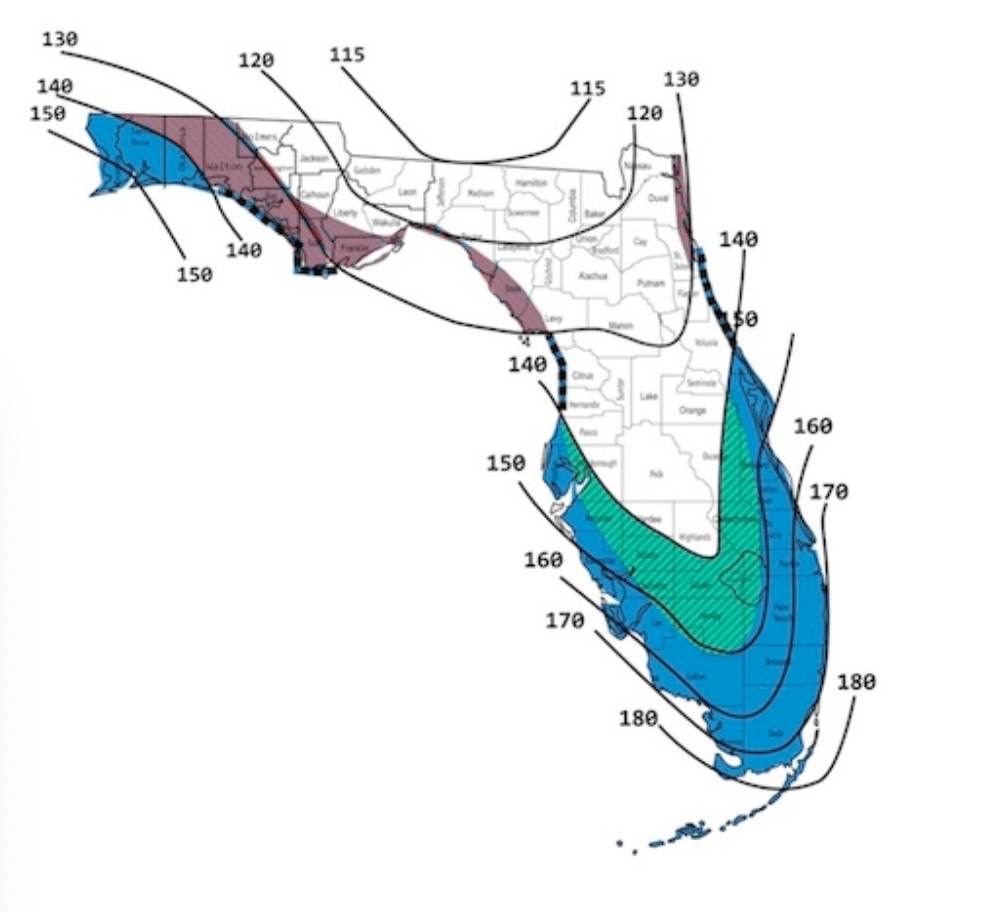

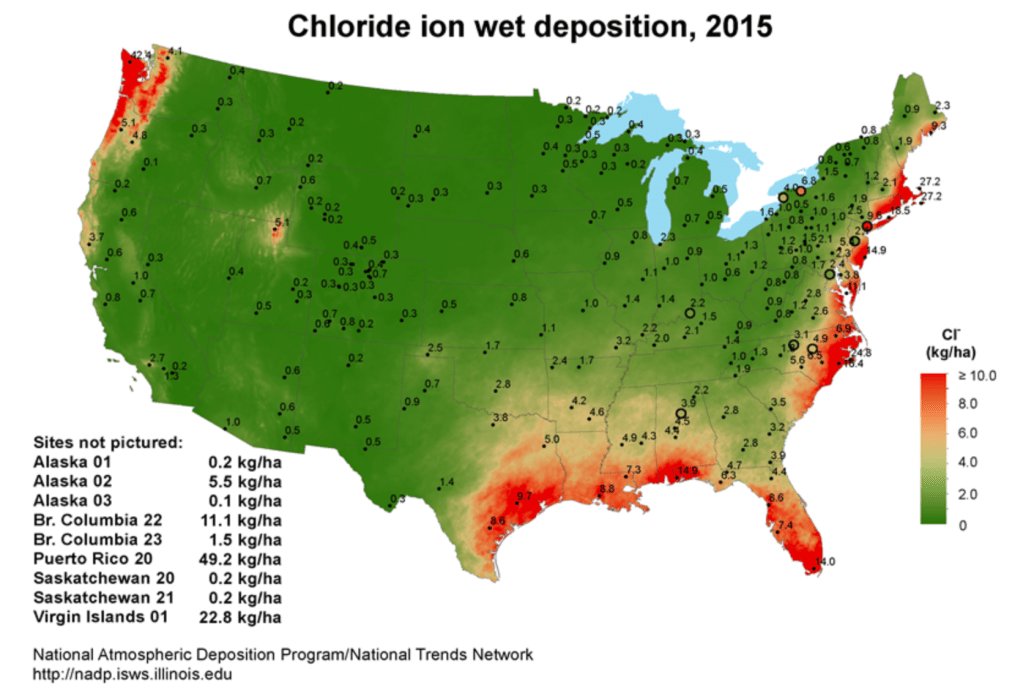

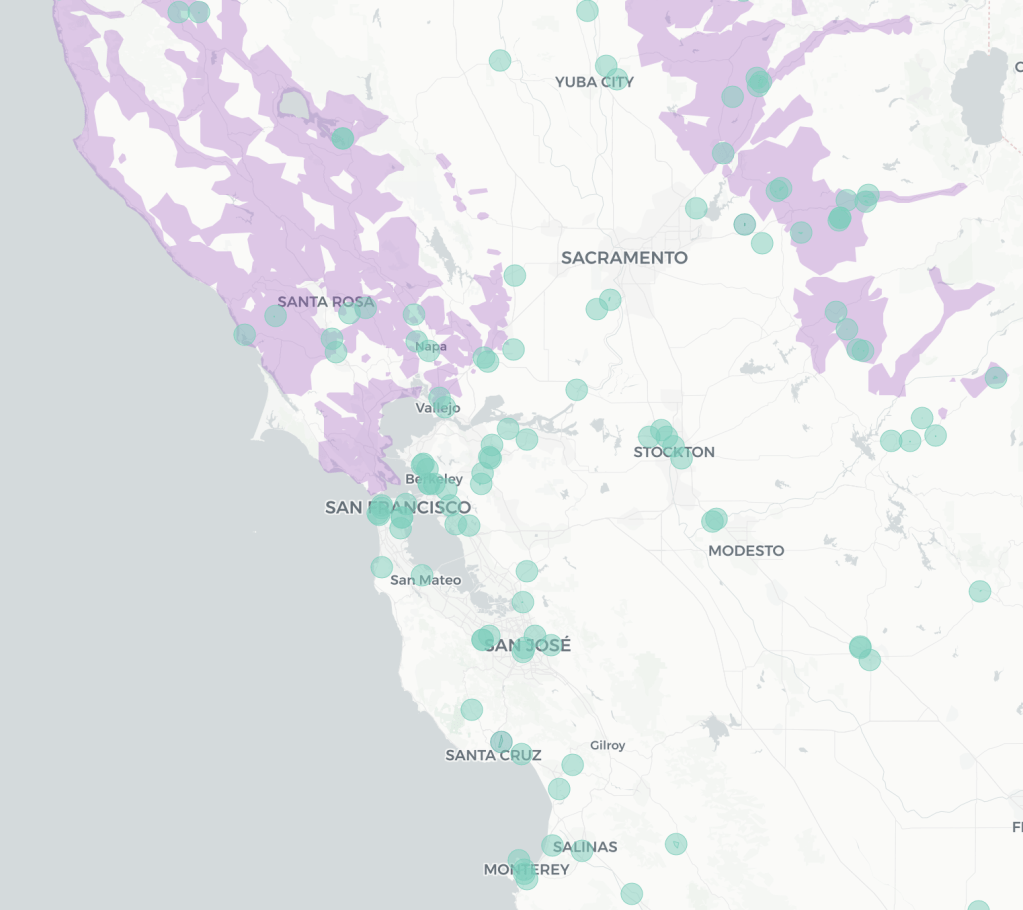

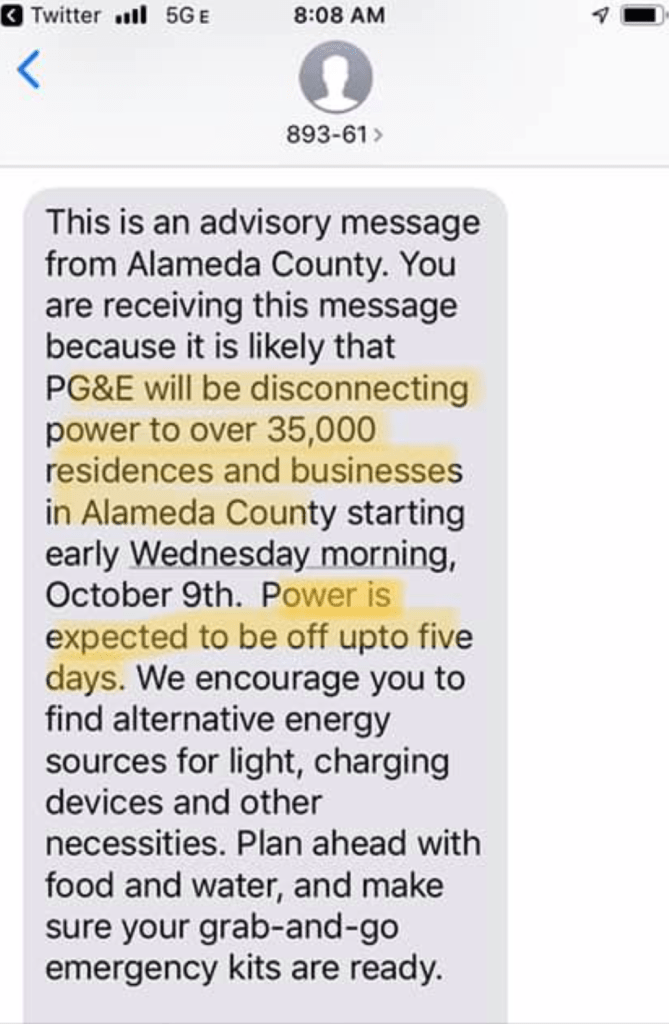

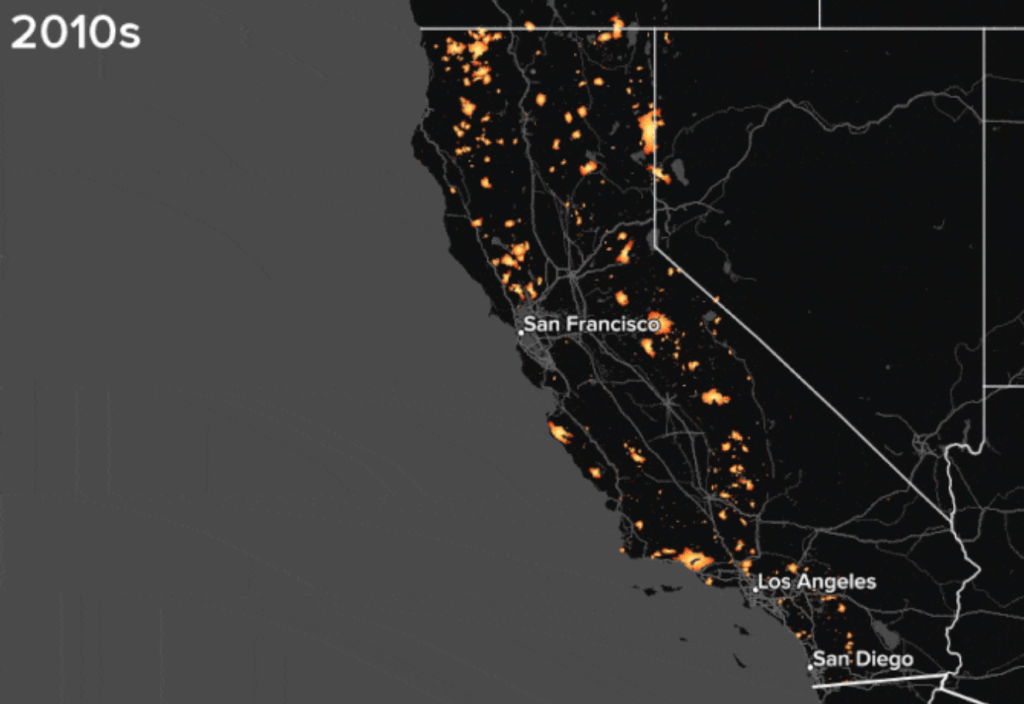

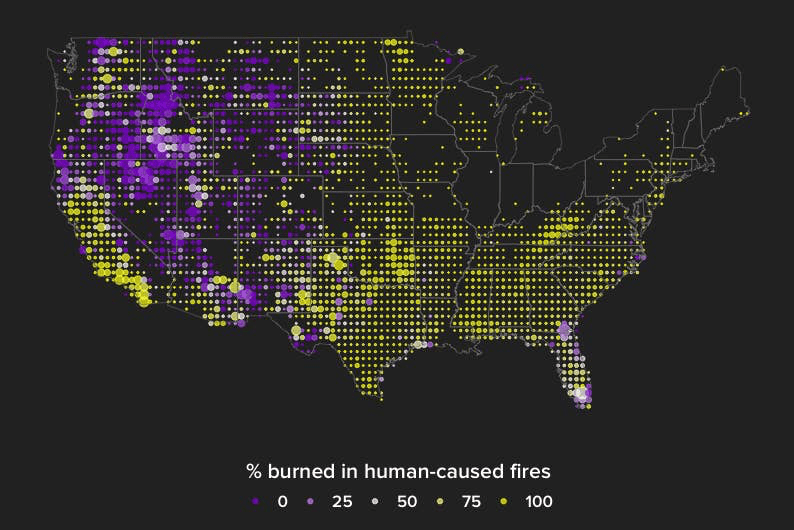

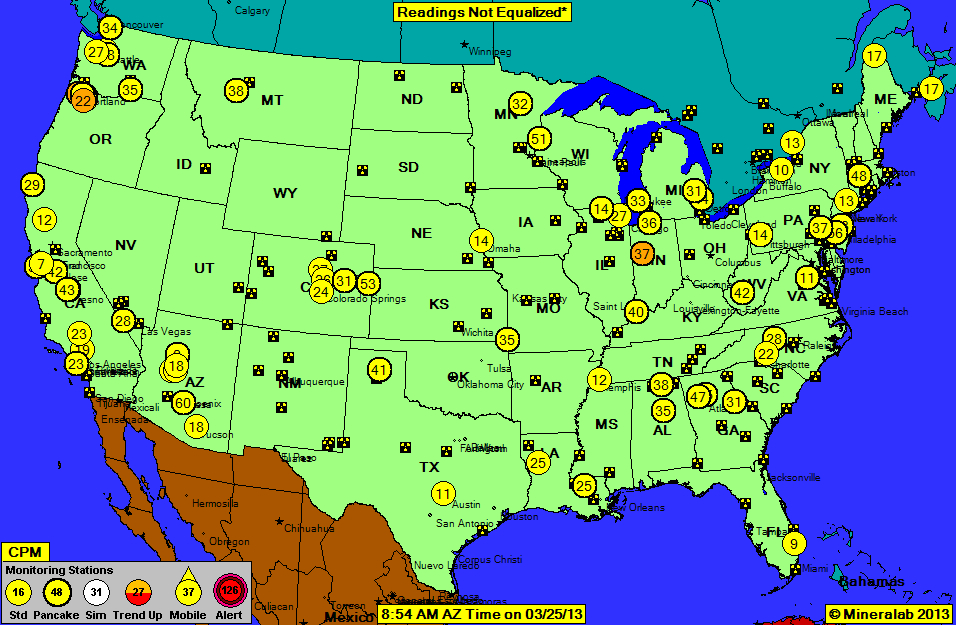

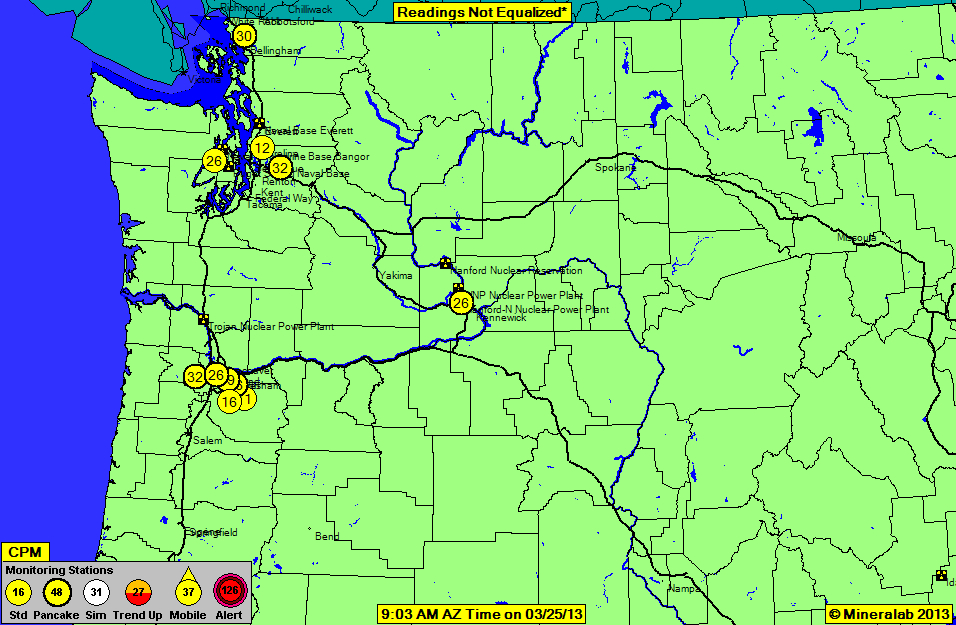

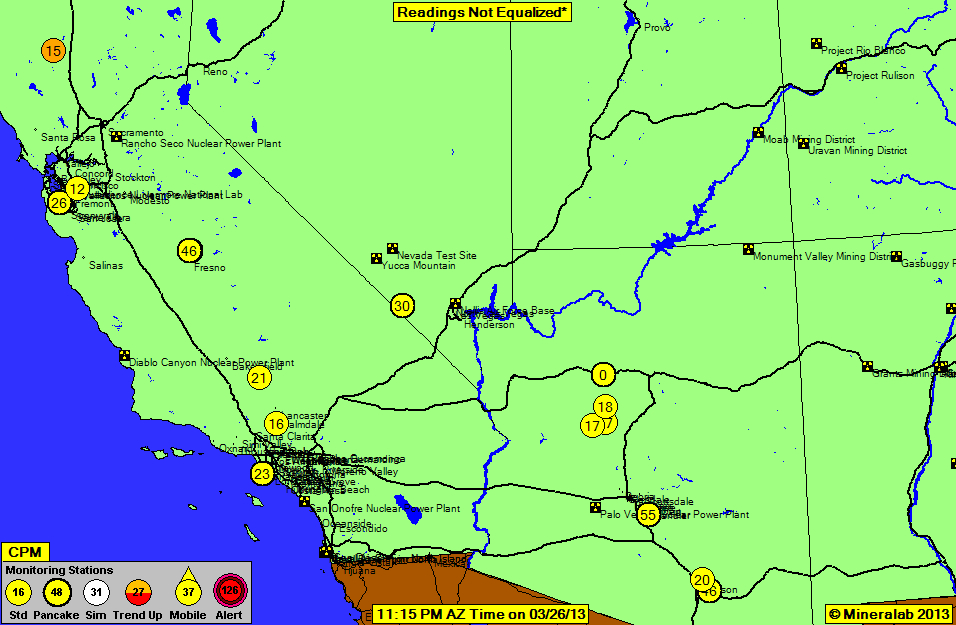

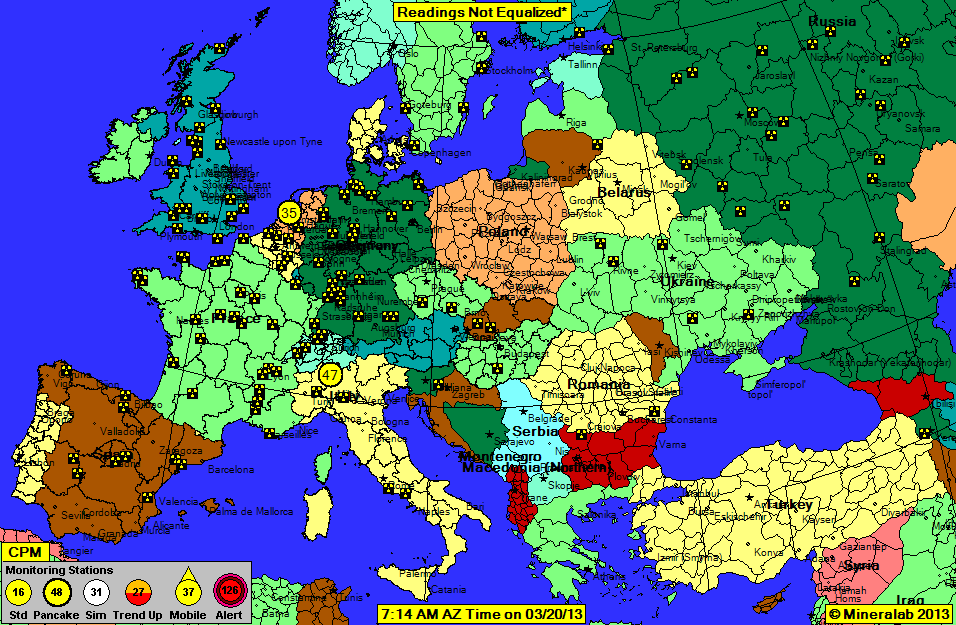

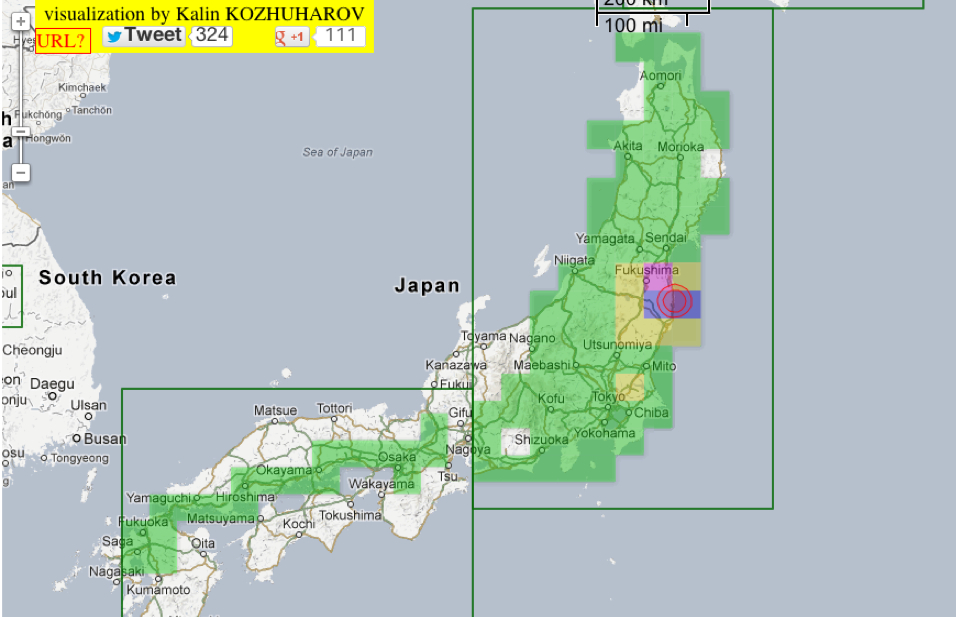

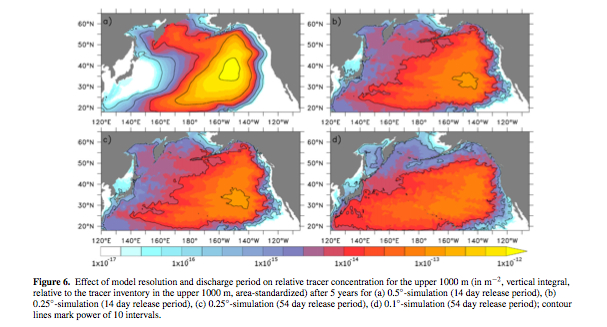

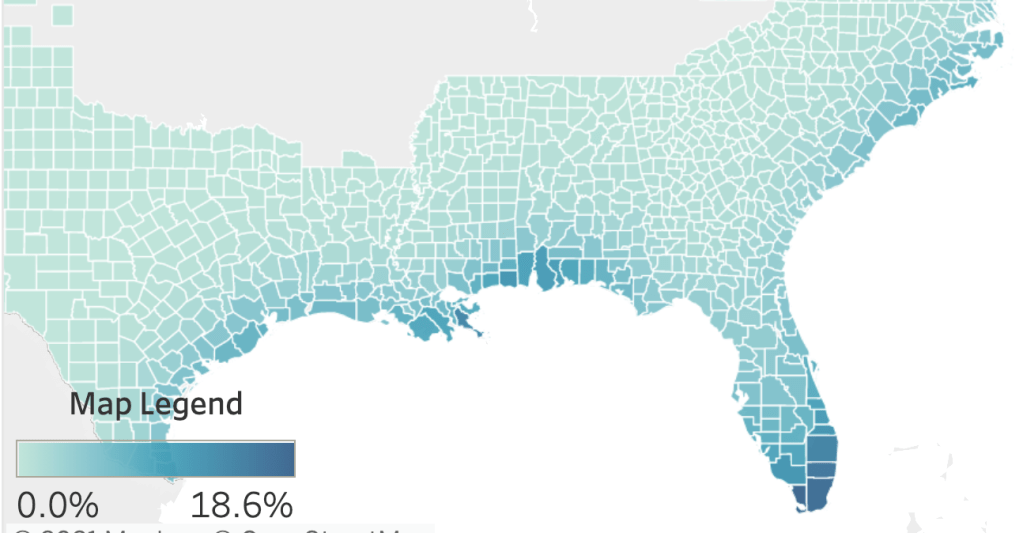

The bright red of coasts in the map below seems to evoke a danger sign, intended to warn viewers about heightened consumer risk from the Gulf Coast to Portland to Florida to the northeast, as sustained exposure to corrosive salt increases risk to over-inhabited coasts, particularly for those renting or owning homes in concrete structures built for solid land but lying in subsiding areas along a sandy beach. Indeed, building codes have since 2010 depended on the gustiness of winds that structures would have to endure, and not only along the coast, as shown in this visualization of minimum standards across the state–mandating the risks coastal housing needs to endure–in which a green cross-hatched band marks new regions added to endure 700-year gusts of wind, inland from Miami.

Florida received a low grade for its infrastructure from the incoming administration of President Biden–he gave the state a “C” rather grudgingly on the nation’s report card as he promoted the American Jobs Plan in April, focussing mostly on the poor condition of highways, bridges, transit lines, internet access, and clean water. The shallow karst of the Biscayne aquifer exposes the drinking supplies of the 2.5 million residents of Miami-Dade County to a huge threat, but the danger to residences has been minimized, it seems, by an increasingly profitable industry of coastal building and development. Incursion of saltwater inland remains a threat to potable water, but it poses structural challenges as well. As coastal Floridians have been obsessed with working on pumps to empty flooded roads to offshore drains, clearing sewer mains, and moving to higher ground, the anthropogenic coastal architecture of towering condominiums offering oceanfront views has been forgotten as a delicate link, whose foundations and piles bear the brunt of the ecotonal crossfire of high winds, saltwater, and salty air that contributed to the “abundant cracking” of concrete that is not meant to withstand saltwater breezes, underground incursion, or the danger of coastal sinkholes in the sandy wetlands where they are built.

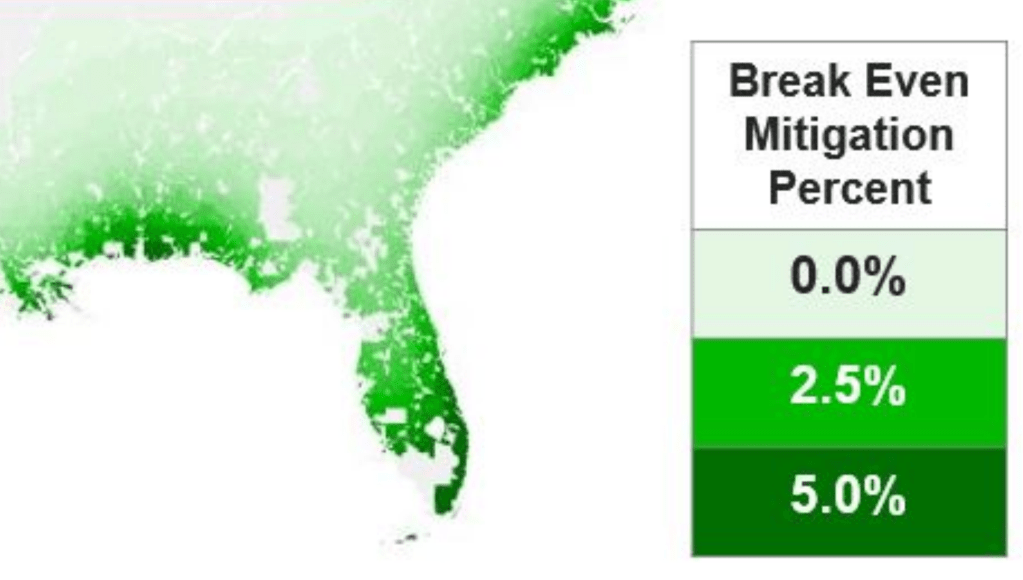

It is hard to look without wincing at a visualization designed to chart the cost-effectiveness with which enhanced concrete would mitigate the damage of hurricanes and extreme weather to coastal communities.

The national map below colors much of the entire eastern and southeastern seaboard red, as a wake-up call for the national infrastructure. In no other coastal community are so many concrete structures so densely clustered as in Florida. Though designed and engineered for land, they are buffeted by salty air on both of the state’s shores, from the Gulf of Mexico and the Atlantic; wind speeds and currents make the coast north of Miami among the saltiest in the world, as high winds can deliver atmospheric salts at a rate of up to 1500 mg/meter, penetrating as far as one hundred miles inland, where they combine with anthropogenic urban pollutants from emissions to construction. The result is a problem of the erosion of building materials, as much as the erosion of the beaches and coastal ecosystem, threatened in Miami by what seems ground zero in sea-level rise and, as a result, by saltwater incursion, and indeed by the atmospheric incursion of salty air–with concentrations of chloride that are particularly corrosive to concrete.

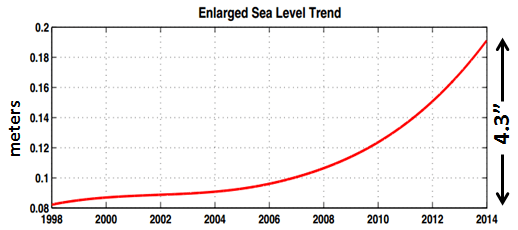

And is the exposure of concrete structures across southern Florida to salty air destined to increase with trends of rising sea levels, already approaching five inches and projected to deviate even more from the historical rate along the coast, exposing anthropogenic structures from skyscrapers to residences to an increased flow of salt air?

We are all mourning the collapse of the Surfside, FL condominium whose concrete pillars were so cracked and crumbling as to expose rusted rebar to salt air. Built on a sandbar’s wetlands, reclaimed as prime property, the town seems suddenly susceptible to structural risk akin to that of earthquakes, posing intimations of mortality fit for an era of climate change. The collapse of the southern tower in the early morning hours poses questions of liability after the detection of cracked columns and “spalling” in foundational slabs of cement that allowed the structural rebar within to deteriorate with rust that will never sleep. Its collapse poses unavoidable questions of liability for lost lives and of the unprecedented risk of failing to respond to concrete cracking. But it also poses questions about the ecotonal nature of the Florida shore, whose stability has been understood only by means of a continuing illusion of a clear division between land and sea, as if to paper over the risk of a crumbling shore, where massive reconstruction projects on porous limestone expose much of the state to the building risk of sinkholes and the sudden implosion or subsidence of the sandy shore in a county that was predominantly marshland, and where the inland incursion of salty air makes it one of the densest sites of inland chloride deposition–up to 8.6 kg/ha, or 860 mg/sq meter–and among the most corrosive settings for the coastal construction of large reinforced concrete buildings facing seaward.

While coastal subsidence may have played a large role in the sudden instability of the foundations that led the flat concrete slab on which the pool sat to crack, and to leak water into the building’s garage in the minutes before it collapsed, the question of liability for the sudden death of Surfside residents must be amply distributed. For if liability can be pinned to an untimely review process, uncertainty over the distribution of costs for repairs among condominium residents, and failures of proper waterproofing of concrete, as well as a slow pace of upkeep or repairs, the distributed liability raises broad questions of the governance of a coastal community. The proposed $9.1 million price of repairs to the facade, inadequate waterproofing, and pool deck was staggering, but the costs of failure to prevent housing collapse are far higher–and that price stands to be a fraction of needed repairs for buildings across Miami-Dade County over time.

The abundance of concrete towers along the coast in Miami-Dade County alone poses broad demands of hazard mitigation for which the Surfside tragedy is only the wake-up call. Experts at MIT’s Concrete Sustainability Hub (CSHub) have called for prioritizing preparation for storms from the earliest stages of building design, and for changes in building codes that respond to the increased buffeting of coastal concrete buildings. They argue that buildings should be designed with expectations of increased damage on the East and Gulf coasts, and that mitigation should begin from the redesign of cement, based on a better understanding of the stresses of an era of climate change. Such restructuring could greatly improve residential buildings along the Florida coast–especially in the hurricane-prone and salt-incursion-prone areas of Miami-Dade County–both by the design of cement with new technologies and by urban texture that allows buildings to sustain increased winds, flooding, and salt damage. Calculated after the flooding of Galveston, TX, the “Break-Even Mitigation” of structural investment in enhanced concrete was argued to provide “disaster proof” homes, by improving roof stability and insulation, as well as by preventing water entry and saltwater corrosion in existing structures: engineering more disaster-resistant concrete for residential buildings would so mitigate meteorological damage to homes as to pay for itself over time. The “Break-Even Mitigation Percent” for residential buildings alone was particularly high, unsurprisingly, along the southern Florida coast.

MIT Concrete Sustainability Hub (CSHub)

1. Although discussion of the causes of its untimely collapse has turned on the findings of “spalling,” “abundant” cracking, and spiderwebbing that led to continued cracks in columns and walls, and has suggested that poor waterproofing exposed its rebar and structure to damage, engineers remind us that the calamity was multi-causal. Yet it is hard to discount the stresses of shifting ecotones of tides, salty air, and underground seepage, creating structural corrosion that was exacerbated by anthropogenic pollution. The shifting ecotones create clear surprises for a building that seems to have been planned for solid ground, but was open to structural weaknesses not only from the corrosion of its structure but from sinking into the sandy limestone on which it was built–opening questions of risk to which the coastal communities of the nation must be waking up with alarm, even as residents of the second tower are not yet evacuated, and raising broad questions of homeowner and consumer risk in a real estate market that was until recently flourishing.

The apparent precarity of the pool’s foundations leads us to try to map the collapsed tower by the structural stresses the forty-year-old building faced in a terrain no longer clearly defined by a separation between land and sea, whether due to a failure of waterproofing or to hidden instabilities in its foundations. And despite continued uncertainty about the causes of the collapse, and the attempts to gain purchase on questions of liability, the tower’s collapse seems to reflect a zeitgeist of deep debates about certainty. Might the anxiety with which we consume current debates about the origins of its collapse in errors of inspection or engineering conceal the shaky foundations of a burst of building on an inherently unstable ecotone? While we had been contemplating mortality for the past several years, the sudden collapse of a coastal tower north of Miami seemed a wake-up call to consider multiple threats to the nation’s infrastructure. Important questions of liability and missed possibilities of prevention will be followed up, but when multiple floors of the south tower of the 1981 condominium that faced the ocean crumbled “as if a bomb went off,” under an almost full moon, we were stunned both by the sudden senseless loss of life, even after a year of contemplating mortality, and by the lack of checks or, pardon the expression, safety nets for the nation’s infrastructure.

The risks residents of the coastal condominium faced seemed to lie not only in failures of inspection and engineering, but in the ecotonal situation of the overbuilt Florida coast. Residents seemed victims of the difficulties of repairing structural compromises and damage in concrete housing, and of a market that encouraged expanding projects of construction in concrete unsuited to salty air. As much as sea-level rise has been used to visualize the rising risk to coastal communities that are among the fastest growing areas of settlement and home ownership, the liability of the Surfside condominium might be best understood by how risk is inherent in an ecotone of overlapping environments. There the coast is poorly understood as a dividing line between land and water, and risk depends on the subterranean incursion of saltwater and increasing inland exposure to salt air, both absent from maps that peg danger simply to sea-level rise. It was decided to dismantle the remaining floors of the partly collapsed tower, but the disaster remains terrifyingly emblematic of the risks the built world faces from the manifold pressures of climate change. While we continue to privilege sea-level rise as a basis to map climate change, does the sudden collapse of a building that shook as if in an earthquake suggest the need to better map the risks of driving piles into sandy limestone or swampy areas of coastal regions, exposed to underground seepage and open to corrosion by the dispersion of salt air?

The search for the bodies of residents buried under the rubble of collapsed housing continued for almost a full week, as we peered into open apartments that were stopped in the course of daily life, as if we were looking at an exploded diagram rather than a collapsed building, wondering what led its foundations to suddenly give way.

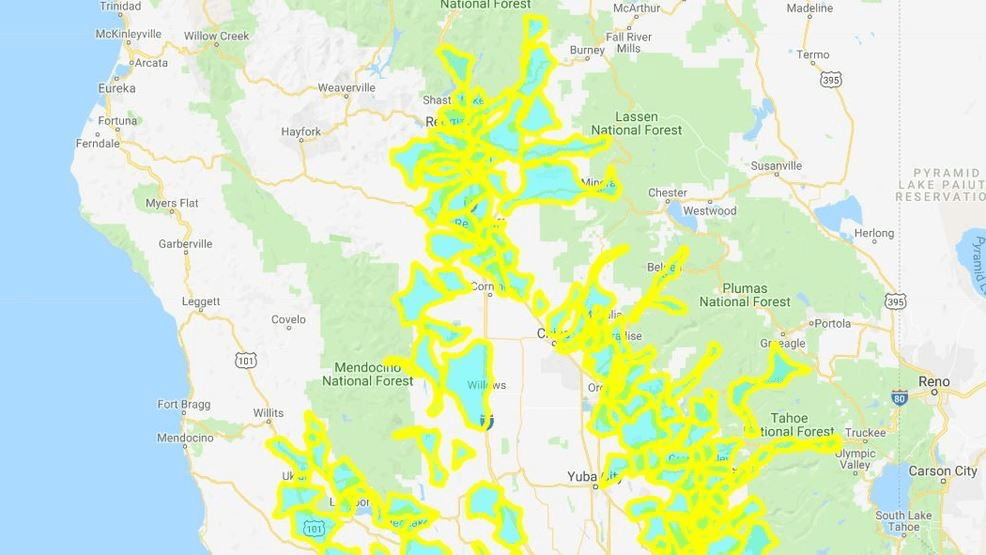

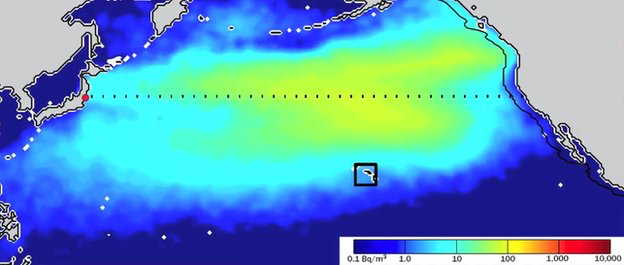

As I’ve been increasingly concerned with sand, concrete, and the shifting borders of coastal shores, it seemed almost amazing that Florida was not a clearer focus of public attention. The striking concentration of salts that oceans deposited along the California coast seemed a battle of attrition with the consolidation and confinement of the shores. Long before Central and Southern Florida were dredged in an attempt to build new housing and real estate, saltwater was already entering the aquifer. The Florida coast was radically reconfigured by massive projects of coastal canalization to drain lands for settlement, which rendered the region vulnerable to saltwater. This risked not only contaminating potable water aquifers, but creating corrosive conditions for the concrete buildings clustered along the shore from Miami-Dade County through Fort Lauderdale and Pompano Beach, as along much of Florida’s coast. Both the incursion of saltwater and the flow of salty air link the determination of risk to the apparent multiplication of coastal ecotones by which the region is plagued, but which are often conceptualized only by sea-level rise. Miami experienced a rise in sea level some six times the global rate in 2011-2015, and the inundation of streets by a foot or two of saltwater from Miami to Ft. Lauderdale was probably either a temporary reflection of atmospheric abnormality or a reflection of the incursion of saltwater across the limestone and sand aquifer, lying less than two meters underground. Did underground incursion of saltwater combine with inland flow of salty air in dangers beyond tidal flooding in a “hotspot” of sea-level rise?
One might begin to understand Surfside, FL as prone to a confluence of ecotones: an overlapping of saline incursion with limestone and its concrete superstructure, and the deposit of wet chloride along its buildings’ surfaces and foundations, an ecotonal multiplication of risk to the consumers of the buildings that an expanding real estate market offered along its pristine shores.

While the inland expansion of saltwater incursion has become a new facet of daily life in Miami-Dade County, where saltwater rises from sewers, reversing drainage outflow to the ocean, and permeates the land, flooding streets and leaving a saltwater smell in the air, the underground penetration of saltwater in these former marshlands has been combatted for some time as if on a military front line, trying to beat the water back into retreat while repressing the extent of the areas already “lost” to the sea. The major consequences of such saltwater intrusion may be the decay and corrosion of underground infrastructure such as water and sewage pipelines, rather than of the deeply set building foundations of condominiums designed to sustain their loads. Yet the presence of incursion suggests something like a different temporality for the half-life of concrete structures that demands to be examined, less in terms of the damage saltwater incursion does to building integrity than in terms of the long immersion of reinforced concrete in a saline environment, wetter and saltier than foundations were built to withstand.

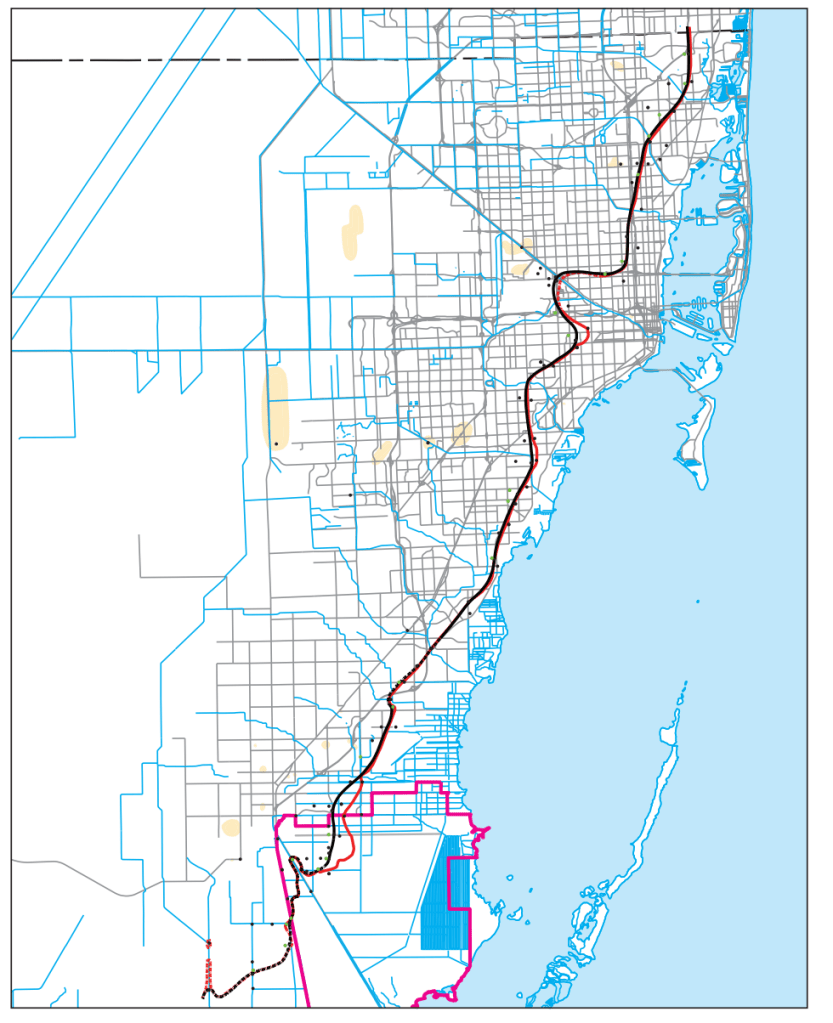

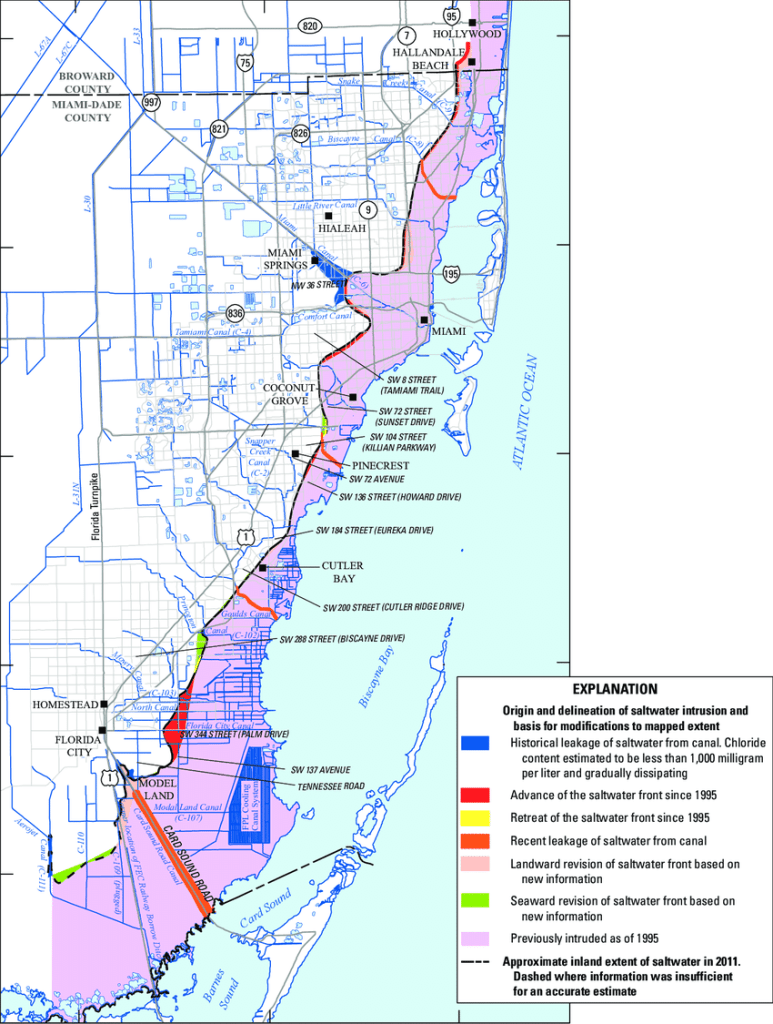

Saltwater intrusion in the Biscayne aquifer and Anthropogenic Modifications in Miami-Dade County, Florida

As much as we have returned to issues of subsidence, saltwater incursion, and other isolated data points of potential structural weakness in the towers, the pressing question of the temporality of building survival has yet to be integrated, in part as we don’t know the vulnerability or stresses to which the concrete foundations of buildings perched on the seaside are exposed. The very expanse of the inland incursion of saltwater measured in 2011 suggests that the effects on foundations of at least a decade of exposure to saltwater have not been determined; the risks to coastal buildings and their inhabitants from the increased displacement of soils as a result of saltwater incursion or coastal construction demand to be assessed. So does the question of how soils will continue to support coastal structures, encouraged by the interest of developers in meeting demand for panoramic views of coastal beaches. While the impact of possible instability on coastal condominiums demands to be studied in medium-sized structures, so do the dangers of ground instability created by increased emendation of beach sand, saltwater incursion, and possible subsidence due to sinkholes. All increase the vulnerabilities of the ecotonal coastline, but only by foregrounding the increased penetration of saltwater, salt air, and soil instability on an increasingly anthropogenic coast can we grasp how the ecotonal intersection of land and sea augments the risks buildings face.
